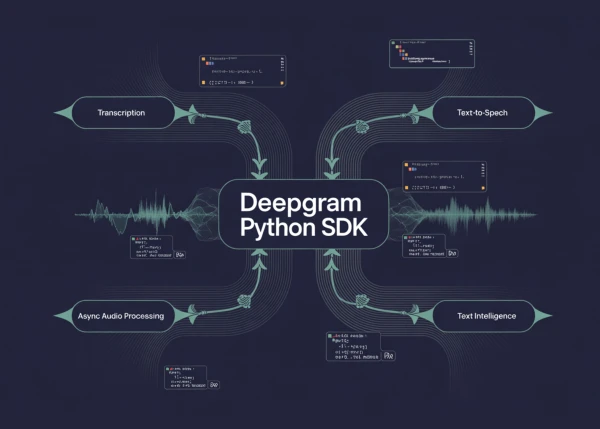

In this hands-on tutorial, we build an end-to-end voice AI workflow using the Deepgram Python SDK, covering transcription, text-to-speech, async audio processing, and text intelligence. After setting up authentication and initializing both synchronous and asynchronous Deepgram clients, we work with real audio data to explore the SDK's capabilities.
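The Deepgram REST API, which the SDK clients wrap, authenticates each request with an `Authorization: Token <key>` header. The sketch below reproduces that step with only the standard library. It illustrates the idea and is not the SDK's own code. Reading the key from a `DEEPGRAM_API_KEY` environment variable is an assumption of this example, and the helper names `load_api_key` and `authed_request` are hypothetical.

```python
import os
import urllib.request
from typing import Optional

API_BASE = "https://api.deepgram.com/v1"


def load_api_key(env_var: str = "DEEPGRAM_API_KEY") -> str:
    """Read the API key from the environment instead of hard-coding it."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first")
    return key


def authed_request(path: str, body: bytes, content_type: str,
                   api_key: Optional[str] = None) -> urllib.request.Request:
    """Build a POST request that carries a token-style Authorization header."""
    key = api_key or load_api_key()  # an explicit key wins over the environment
    return urllib.request.Request(
        f"{API_BASE}/{path.lstrip('/')}",
        data=body,
        headers={"Authorization": f"Token {key}", "Content-Type": content_type},
        method="POST",
    )
```

Keeping the key in the environment means the same script runs unchanged in a notebook, a CI job, or a container, and the key never lands in version control.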

First, we transcribe audio from a remote URL using the nova-3 model with smart formatting, speaker diarization, and filler-word transcription enabled. The output includes the full transcript, confidence scores, word-level timestamps, and speaker labels. We then transcribe a local audio file with paragraph segmentation and AI-generated summarization enabled, which yields a transcript that is easier to read and analyze.
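The following sketch shows what the two transcription calls send, targeting Deepgram's pre-recorded REST endpoint (`/v1/listen`) directly rather than going through the SDK. The query parameters mirror the options named above. Treat several details as assumptions to check against the current API reference: the `summarize=v2` value, the response field names read in `digest`, the default `audio/wav` MIME type, and every helper name.

```python
import json
import urllib.parse
import urllib.request
from pathlib import Path

LISTEN_ENDPOINT = "https://api.deepgram.com/v1/listen"  # pre-recorded transcription


def listen_url(**options) -> str:
    """Encode options as query parameters, sending booleans as 'true'/'false'."""
    query = {k: str(v).lower() if isinstance(v, bool) else v for k, v in options.items()}
    return f"{LISTEN_ENDPOINT}?{urllib.parse.urlencode(query)}"


def remote_audio_request(api_key: str, audio_url: str) -> urllib.request.Request:
    # Remote audio: Deepgram fetches the URL itself, so the body is a small JSON object.
    url = listen_url(model="nova-3", smart_format=True, diarize=True, filler_words=True)
    return urllib.request.Request(
        url,
        data=json.dumps({"url": audio_url}).encode("utf-8"),
        headers={"Authorization": f"Token {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def local_audio_request(api_key: str, path: str, mimetype: str = "audio/wav") -> urllib.request.Request:
    # Local audio: the raw file bytes are the body; paragraphs and a summary are requested.
    url = listen_url(model="nova-3", smart_format=True, paragraphs=True, summarize="v2")
    return urllib.request.Request(
        url,
        data=Path(path).read_bytes(),
        headers={"Authorization": f"Token {api_key}", "Content-Type": mimetype},
        method="POST",
    )


def digest(response: dict) -> dict:
    """Pull transcript, confidence, word timings, speakers, paragraphs, and summary."""
    results = response["results"]
    alt = results["channels"][0]["alternatives"][0]  # first channel, best alternative
    words = [
        (w.get("punctuated_word", w["word"]), w["start"], w["end"], w.get("speaker"))
        for w in alt.get("words", [])
    ]
    return {
        "transcript": alt["transcript"],
        "confidence": alt["confidence"],
        "words": words,  # (text, start_seconds, end_seconds, speaker)
        "speakers": sorted({spk for *_, spk in words if spk is not None}),
        "paragraphs": alt.get("paragraphs", {}).get("transcript"),  # only with paragraphs=true
        "summary": results.get("summary", {}).get("short"),  # only with summarize enabled
    }
```

Sending either request and reading the reply is then `digest(json.load(urllib.request.urlopen(req)))`. Remote audio travels as a URL so large files never pass through the client. Local audio is uploaded as bytes with its MIME type.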

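To show how the pipeline scales, we run the URL-based and file-based transcriptions concurrently using asyncio.gather. Because each request spends most of its time waiting on the network, overlapping them brings the total wall-clock time close to that of the slowest request rather than the sum of both, and the same structure extends to larger batches in production.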
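The concurrency pattern can be sketched without network access. `asyncio.gather` awaits several awaitables at once and returns their results in the order they were passed. With the SDK's async client you would await its coroutines directly. As a stand-in, this sketch moves each blocking call onto a worker thread with `asyncio.to_thread` (Python 3.9+). `run_concurrently` and `fake_transcribe` are hypothetical names, and the latter only sleeps to simulate network latency.

```python
import asyncio
import time
from typing import Callable, List, Sequence, TypeVar

T = TypeVar("T")
R = TypeVar("R")


async def run_concurrently(jobs: Sequence[T], blocking_call: Callable[[T], R]) -> List[R]:
    """Run one blocking call per job on worker threads and await them together."""
    # asyncio.to_thread returns a coroutine that runs the call in the default
    # thread pool; gather schedules all of them and preserves input order.
    return await asyncio.gather(*(asyncio.to_thread(blocking_call, job) for job in jobs))


def fake_transcribe(source: str) -> str:
    """Hypothetical stand-in for a blocking transcription request."""
    time.sleep(0.3)  # simulated network latency
    return f"transcript of {source}"


if __name__ == "__main__":
    start = time.perf_counter()
    results = asyncio.run(run_concurrently(["remote-url", "local-file"], fake_transcribe))
    elapsed = time.perf_counter() - start
    # The two sleeps overlap, so elapsed is near 0.3 s instead of 0.6 s.
    print(results, f"{elapsed:.2f}s")
```

Replacing `fake_transcribe` with a function that actually sends a transcription request keeps the same structure. Only the per-job call changes.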

The tutorial also covers text-to-speech. We generate an MP3 file from sample text using the aura-2-asteria-en voice, then compare several TTS voices (Asteria, Orion, and Luna) to show the range of available voices.
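Here is a standard-library sketch of the synthesis step against Deepgram's `/v1/speak` REST endpoint, which takes the text as JSON and returns audio bytes. Several details are assumptions of this example. The voice IDs `aura-2-orion-en` and `aura-2-luna-en` are assumed to follow the same naming pattern as `aura-2-asteria-en`. The explicit `encoding=mp3` parameter and the `<model>.mp3` file naming are also assumptions, and the helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request
from pathlib import Path
from typing import List, Sequence

SPEAK_ENDPOINT = "https://api.deepgram.com/v1/speak"
# Asteria is named in the text; the Orion and Luna IDs are assumed from the same pattern.
VOICES = ("aura-2-asteria-en", "aura-2-orion-en", "aura-2-luna-en")


def speak_request(api_key: str, text: str, model: str) -> urllib.request.Request:
    """Build a TTS request: voice and encoding in the query string, text in a JSON body."""
    query = urllib.parse.urlencode({"model": model, "encoding": "mp3"})
    return urllib.request.Request(
        f"{SPEAK_ENDPOINT}?{query}",
        data=json.dumps({"text": text}).encode("utf-8"),
        headers={"Authorization": f"Token {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def synthesize(api_key: str, text: str, model: str, out_dir: str = ".") -> Path:
    """Send the request and save the returned audio bytes as <model>.mp3."""
    out_path = Path(out_dir) / f"{model}.mp3"
    with urllib.request.urlopen(speak_request(api_key, text, model)) as resp:
        out_path.write_bytes(resp.read())
    return out_path


def compare_voices(api_key: str, text: str, voices: Sequence[str] = VOICES) -> List[Path]:
    """Render the same text once per voice so the files can be compared side by side."""
    return [synthesize(api_key, text, voice) for voice in voices]
```

Using the same sentence for every voice makes the comparison fair, because only the voice changes between files.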

Finally, we apply text intelligence features to a sample review. Sentiment analysis classifies the review as positive, topic extraction identifies "customer service" and "product quality" as its key themes, and intent detection captures the user's intent to purchase. Together, these steps show how Deepgram’s SDK combines transcription, speech synthesis, and natural language understanding in a single Python environment.
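The text-intelligence step can be sketched in two parts. The first is a single request to Deepgram's `/v1/read` endpoint with sentiment, topic, and intent detection switched on. The second is a function that reduces the JSON reply to the three results the review analysis reports. The response field names (`sentiments.average`, `topics.segments`, `intents.segments`) reflect our understanding of the documented response shape and should be treated as assumptions. The helper names are hypothetical.

```python
import json
import urllib.parse
import urllib.request
from typing import Iterable, List

READ_ENDPOINT = "https://api.deepgram.com/v1/read"


def read_request(api_key: str, text: str) -> urllib.request.Request:
    """Ask for sentiment, topics, and intents on a piece of English text."""
    query = urllib.parse.urlencode(
        {"language": "en", "sentiment": "true", "topics": "true", "intents": "true"}
    )
    return urllib.request.Request(
        f"{READ_ENDPOINT}?{query}",
        data=json.dumps({"text": text}).encode("utf-8"),
        headers={"Authorization": f"Token {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def _unique(items: Iterable[str]) -> List[str]:
    # dict.fromkeys drops duplicates while keeping first-seen order.
    return list(dict.fromkeys(items))


def digest_read(response: dict) -> dict:
    """Reduce a /v1/read reply to overall sentiment plus distinct topics and intents."""
    results = response.get("results", {})
    topic_segments = results.get("topics", {}).get("segments", [])
    intent_segments = results.get("intents", {}).get("segments", [])
    return {
        "sentiment": results.get("sentiments", {}).get("average", {}).get("sentiment"),
        "topics": _unique(t["topic"] for seg in topic_segments for t in seg.get("topics", [])),
        "intents": _unique(i["intent"] for seg in intent_segments for i in seg.get("intents", [])),
    }
```

With a real key, `digest_read(json.load(urllib.request.urlopen(read_request(key, review))))` returns the condensed result. Deduplicating topics matters because the same theme can be detected in several segments of one review.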

All code examples are self-contained and ready to adapt for real-world applications such as call center analytics, voice assistants, and content generation.