Imagine being able to read someone's thoughts directly from their brain activity. That is the promise of a new deep learning framework called NeuralSet, which can decode linguistic features from magnetoencephalography (MEG) signals with remarkable accuracy.

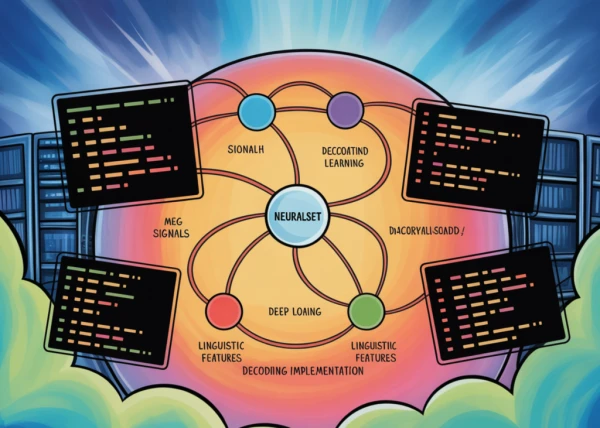

Developed by researchers working at the intersection of neuroscience and artificial intelligence, NeuralSet uses a specialized architecture that learns to map brain signals to semantic representations of words and sentences. The system takes MEG data, recorded with a non-invasive technique that measures the magnetic fields generated by neural activity, and predicts which linguistic elements a person is perceiving or producing.

"This is a significant step toward practical brain-computer interfaces that could restore communication for people with paralysis or locked-in syndrome," the researchers noted.

The model works by first extracting features from raw MEG signals using convolutional neural networks, then aligning those features with a language model's semantic space. In experiments, NeuralSet successfully predicted nouns, verbs, and even the meanings of short phrases from brain activity patterns.
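To make the two-stage idea concrete, here is a minimal NumPy sketch of that kind of pipeline. It is an illustration under stated assumptions, not NeuralSet's actual code: random temporal filters stand in for a trained convolutional network, ridge regression stands in for whatever alignment objective the authors use, and the function names (`conv_features`, `fit_alignment`, `decode`) and the synthetic data are hypothetical.

```python
import numpy as np


def conv_features(meg, filters):
    """Temporal convolution + ReLU + mean pooling over time.

    meg:     (n_trials, n_channels, n_times) array of MEG epochs
    filters: (n_filters, n_channels, width) convolution kernels
    returns: (n_trials, n_filters) feature matrix
    """
    width = filters.shape[2]
    # Sliding windows along time: (n_trials, n_channels, n_times - width + 1, width)
    windows = np.lib.stride_tricks.sliding_window_view(meg, width, axis=2)
    # Contract over channels and filter taps to get one response per time step
    responses = np.einsum("nctw,fcw->nft", windows, filters)
    return np.maximum(responses, 0.0).mean(axis=2)


def fit_alignment(features, embeddings, alpha=1.0):
    """Ridge regression mapping brain features into the embedding space.

    Assumption: a linear map is used here only for illustration.
    returns: W with shape (n_features, embedding_dim)
    """
    n_features = features.shape[1]
    gram = features.T @ features + alpha * np.eye(n_features)
    return np.linalg.solve(gram, features.T @ embeddings)


def decode(features, W, vocab_embeddings):
    """Return, for each trial, the index of the vocabulary embedding
    with the highest cosine similarity to the predicted embedding."""
    pred = features @ W
    pred = pred / np.linalg.norm(pred, axis=1, keepdims=True)
    vocab = vocab_embeddings / np.linalg.norm(vocab_embeddings, axis=1, keepdims=True)
    return (pred @ vocab.T).argmax(axis=1)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    meg = rng.standard_normal((40, 8, 100))    # synthetic trials, not real MEG
    filters = rng.standard_normal((16, 8, 9))  # random stand-in for learned CNN filters
    vocab = rng.standard_normal((5, 12))       # stand-in for language model embeddings
    word_ids = rng.integers(0, 5, size=40)     # which word each trial corresponds to

    feats = conv_features(meg, filters)
    W = fit_alignment(feats, vocab[word_ids])
    print(decode(feats, W, vocab)[:10])
```

Because the data here is random noise, the decoded indices carry no meaning; the point is only the shape of the computation: signals go in, features come out, and a learned map places those features in the same space as word embeddings so that the nearest word can be looked up.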

While still early-stage, this research showcases how deep learning can bridge the gap between neural recordings and human language. The code for NeuralSet has been open-sourced, allowing other teams to replicate and build upon the findings.

If the approach matures, it could eventually lead to communication devices that translate thoughts into text in real time, offering new hope for patients with severe motor disabilities.