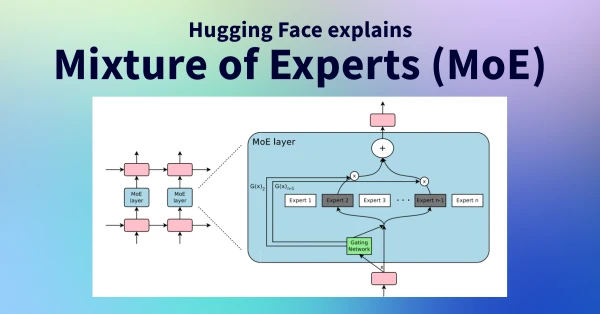

Mixture of Experts (MoE) is a neural network architecture that splits parts of a model into multiple sub-networks, called "experts." The experts are trained jointly with the rest of the model, and each tends to specialize in different kinds of input. A gating mechanism (often called a router) selects which experts to activate for a given input, so only a fraction of the model's parameters are used at a time. This lets the model scale to a very large total parameter count while keeping per-input compute lower than that of a dense model of the same size. The technique is used in state-of-the-art language models like Mixtral 8x7B, which routes each token to 2 of its 8 experts per layer and so performs well without activating all of its parameters. This combination of efficiency and specialization is why MoE appears in many modern AI systems.
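To make the gating idea concrete, here is a minimal sketch of a single MoE layer with top-k routing, written in plain Python. It is an illustration under stated assumptions, not how any particular model implements it: the names `moe_forward`, `gate_w`, and `experts` are hypothetical, the weights are random, and each "expert" is reduced to one matrix multiply followed by `tanh`.

```python
import math
import random


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]


def moe_forward(x, gate_w, experts, top_k=2):
    """Route one input vector through its top_k highest-scoring experts.

    x       : input vector of length d_model
    gate_w  : gating weights, one row of length d_model per expert
    experts : one d_model x d_model weight matrix per expert (toy experts)
    """
    # The gate produces one score per expert.
    logits = matvec(gate_w, x)

    # Keep only the top_k experts; the rest are never evaluated.
    chosen = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]

    # Renormalize the gate scores over the chosen experts only.
    weights = softmax([logits[i] for i in chosen])

    # The output is the weighted sum of the chosen experts' outputs.
    out = [0.0] * len(x)
    for w, i in zip(weights, chosen):
        y = [math.tanh(v) for v in matvec(experts[i], x)]
        out = [o + w * yi for o, yi in zip(out, y)]
    return out, chosen, weights


# Toy sizes chosen for illustration only.
random.seed(0)
d_model, n_experts = 4, 8
x = [random.gauss(0, 1) for _ in range(d_model)]
gate_w = [[random.gauss(0, 1) for _ in range(d_model)] for _ in range(n_experts)]
experts = [
    [[random.gauss(0, 1) for _ in range(d_model)] for _ in range(d_model)]
    for _ in range(n_experts)
]

out, chosen, weights = moe_forward(x, gate_w, experts, top_k=2)
print("experts used:", chosen)
print("mixing weights:", weights)
```

With `top_k=2` and 8 experts, only two of the eight expert matrices are touched for this input, which is where the compute saving comes from. Real implementations batch tokens per expert and usually add a load-balancing term during training so the router does not collapse onto a few experts; both are omitted here.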

Demystifying Mixture of Experts: A Key AI Architecture

AI

April 26, 2026 · 4:37 PM