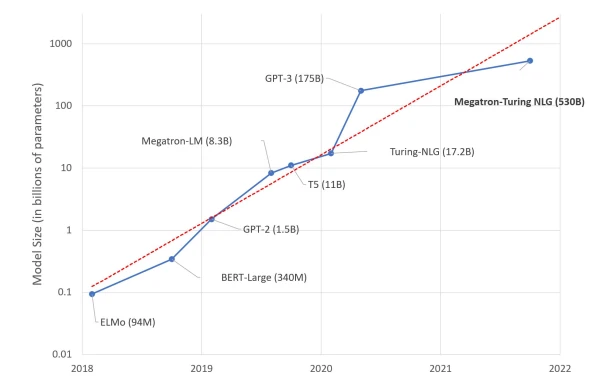

A few days ago, Microsoft and NVIDIA unveiled Megatron-Turing NLG 530B, a Transformer-based model touted as "the world’s largest and most powerful generative language model." While this showcases impressive engineering, I'm not convinced bigger is better. Here's why.

This is Your Brain on Deep Learning

The human brain contains roughly 86 billion neurons and 100 trillion synapses, not all dedicated to language. Interestingly, GPT-4 is expected to have about 100 trillion parameters. Should we really be building language models the size of a brain? Our intuition says something doesn't add up.

Deep Learning, Deep Pockets?

Training a 530-billion parameter model requires hundreds of DGX A100 servers, each costing $199,000. Add networking and hosting, and the total approaches $100 million. Which organizations have use cases justifying that expense? Very few. So who are these models for?

That Warm Feeling is your GPU Cluster

Each DGX server consumes up to 6.5 kilowatts, plus cooling. A 2019 study found training BERT (340 million parameters) emits as much CO2 as a trans-American flight. What about Megatron-Turing? These numbers are hard to ignore.

So?

Am I excited by Megatron-Turing? No. Is the marginal benchmark improvement worth the cost, complexity, and carbon footprint? No. Does building these huge models help organizations adopt machine learning? No. I'll leave mega-models to marketing and tech supremacy.

Instead, here are practical techniques for high-quality solutions:

Use Pretrained Models

Start with existing models for your task (e.g., summarizing English text). Test a few on your data; if results are acceptable, you're done. If you need more accuracy, fine-tune.

Use Smaller Models

Pick the smallest model that meets your accuracy needs. It predicts faster and requires fewer resources. Examples: SqueezeNet (50x smaller than AlexNet) and DistilBERT (40% smaller, 60% faster, 97% of BERT's understanding).

Fine-Tune Models

Rarely train from scratch. Fine-tuning a pretrained model is faster and cheaper.