A new open-source implementation called kvcached demonstrates how elastic KV-cache memory allocation can improve GPU utilization for large language model (LLM) inference. Built on top of vLLM, kvcached allocates and releases key-value cache memory as workload demand changes, rather than reserving a fixed pool up front as static allocation strategies do.

In a detailed tutorial, developers walk through setting up the environment, deploying lightweight Qwen2.5 models via an OpenAI-compatible API, and simulating bursty workloads to compare memory usage between elastic and static allocation. The experiments use concurrent request waves separated by idle periods, mimicking real-world traffic patterns where requests arrive in bursts.
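The wave-and-idle traffic pattern can be sketched roughly as follows. This is a minimal illustration, not the tutorial's generator: `run_bursts` and `fake_request` are hypothetical names, and the default wave count, concurrency, and idle time are assumptions. In a real run, `send_request` would issue an HTTP call to the OpenAI-compatible endpoint instead of sleeping.

```python
import asyncio
import time
from typing import Awaitable, Callable, List


async def run_bursts(
    send_request: Callable[[int], Awaitable[None]],
    waves: int = 3,
    concurrency: int = 8,
    idle_seconds: float = 5.0,
) -> List[float]:
    """Fire `waves` bursts of `concurrency` concurrent requests.

    Sleeps `idle_seconds` between bursts and returns the wall-clock
    duration of each burst in seconds.
    """
    durations: List[float] = []
    for wave in range(waves):
        start = time.perf_counter()
        # All requests in a wave are launched together and awaited as a group.
        await asyncio.gather(
            *(send_request(wave * concurrency + i) for i in range(concurrency))
        )
        durations.append(time.perf_counter() - start)
        if wave < waves - 1:
            # Idle gap: this is where an elastic allocator could release memory.
            await asyncio.sleep(idle_seconds)
    return durations


async def fake_request(request_id: int) -> None:
    """Hypothetical stand-in for a call to an OpenAI-compatible endpoint."""
    await asyncio.sleep(0.01)


if __name__ == "__main__":
    burst_times = asyncio.run(run_bursts(fake_request, waves=2, idle_seconds=1.0))
    print(burst_times)
```

Passing the request function as a parameter keeps the traffic shape separate from the transport, so the same generator can drive either server configuration.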

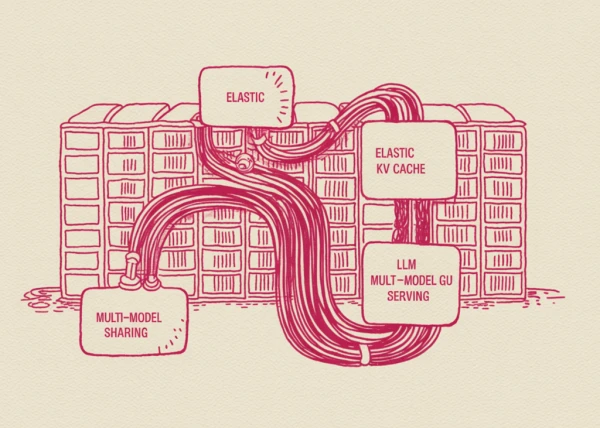

Key findings show that kvcached’s dynamic approach reduces peak VRAM consumption and lets memory be reclaimed during idle periods. Static allocation cannot do this, because its KV-cache pool stays reserved whether or not requests are in flight. The implementation also supports multi-model GPU sharing, where memory shifts between active models in real time.
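The difference between the two strategies can be illustrated with a toy model. It is a deliberately simplified sketch and does not reflect how kvcached works internally: the function names, the 512 MiB page size, the 8192 MiB pool, and the demand trace are all invented for illustration, and the model ignores model weights, activations, and any delay in releasing memory.

```python
from typing import List


def static_reserved(demand_mib: List[int], pool_mib: int) -> List[int]:
    """Static allocation: the whole pool stays reserved at every time step."""
    return [pool_mib for _ in demand_mib]


def elastic_reserved(demand_mib: List[int], page_mib: int = 512) -> List[int]:
    """Elastic allocation (toy): reserve just enough fixed-size pages to
    cover the current demand, and give everything back when demand is zero."""
    reserved = []
    for demand in demand_mib:
        pages = (demand + page_mib - 1) // page_mib  # integer ceiling division
        reserved.append(pages * page_mib)
    return reserved


if __name__ == "__main__":
    # Hypothetical KV-cache demand: two bursts separated by idle steps.
    demand = [0, 3200, 6100, 0, 0, 2800, 0]
    print("static :", static_reserved(demand, pool_mib=8192))
    print("elastic:", elastic_reserved(demand))
```

In this model the static trace is flat at the pool size, while the elastic trace follows demand and drops to zero in the idle steps, which is the memory a second model could use.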

The tutorial includes code snippets for launching vLLM servers with kvcached enabled, monitoring VRAM usage via pynvml, and running bursty workload generators. The project aims to make LLM serving more cost-effective by maximizing hardware utilization, especially in environments with variable request rates.
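VRAM monitoring of this kind can be sketched with pynvml's standard calls (`nvmlInit`, `nvmlDeviceGetHandleByIndex`, `nvmlDeviceGetMemoryInfo`). The helper names, sampling interval, and summary fields below are assumptions rather than the tutorial's code. The reader is passed in as a callable so the sampler can be exercised without a GPU.

```python
import time
from typing import Callable, Dict, List, Tuple


def read_used_mib_nvml(gpu_index: int = 0) -> float:
    """Return used memory on one GPU in MiB via NVML.

    Requires an NVIDIA driver and the pynvml package.
    """
    import pynvml  # imported lazily so the rest of the module works without it

    pynvml.nvmlInit()
    try:
        handle = pynvml.nvmlDeviceGetHandleByIndex(gpu_index)
        return pynvml.nvmlDeviceGetMemoryInfo(handle).used / (1024 ** 2)
    finally:
        pynvml.nvmlShutdown()


def sample_vram(
    read_used_mib: Callable[[], float],
    samples: int = 10,
    interval_s: float = 0.5,
) -> List[Tuple[float, float]]:
    """Collect (elapsed_seconds, used_mib) pairs at a fixed interval."""
    trace: List[Tuple[float, float]] = []
    start = time.monotonic()
    for i in range(samples):
        trace.append((time.monotonic() - start, read_used_mib()))
        if i < samples - 1:
            time.sleep(interval_s)
    return trace


def summarize(trace: List[Tuple[float, float]]) -> Dict[str, float]:
    """Report the peak and minimum usage seen in a trace."""
    used = [mib for _, mib in trace]
    return {"peak_mib": max(used), "min_mib": min(used)}


if __name__ == "__main__":
    print(summarize(sample_vram(read_used_mib_nvml, samples=5)))
```

Running the sampler in a background thread or separate process while the workload generator fires would give one trace per configuration to compare.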

For developers interested in optimizing inference infrastructure, the full guide provides hands-on steps to reproduce the experiments and compare performance metrics.