A few weeks ago, Habana Labs and Hugging Face announced a partnership to accelerate Transformer model training. Habana Gaudi processors offer up to 40% better price-performance than GPU-based Amazon EC2 instances. This hands-on guide shows how to set up a Gaudi instance on AWS and fine-tune a BERT model for text classification.

Setting Up a Habana Gaudi Instance on AWS

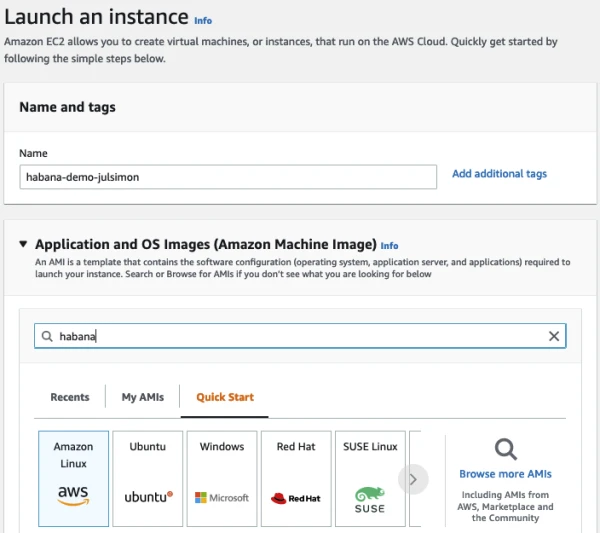

The easiest way to use Gaudi accelerators is via Amazon EC2 DL1 instances, which come with 8 Gaudi processors each. Launch a DL1 instance using the Habana Deep Learning AMI (Ubuntu 20.04) from the AWS Marketplace. Key steps:

- In the EC2 console, choose the DL1 instance type (dl1.24xlarge).

- Select an existing key pair or create one for SSH access.

- Allow inbound SSH traffic in the security group (in production, restrict the source to your own IP address).

- Increase storage to at least 50 GB.

- Assign an IAM role if needed (not required for this demo).

- Use Spot Instances to save up to 70% on costs ($3.93/hr instead of $13.11/hr).

- Launch the instance and SSH into it.
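The Spot saving quoted in the steps above follows directly from the two hourly prices. Here is a quick check that uses only the figures given in the list. The function and variable names are illustrative, and actual Spot prices fluctuate over time and by region.

```python
# Hourly prices for dl1.24xlarge as quoted in this guide (USD).
ON_DEMAND_PER_HOUR = 13.11
SPOT_PER_HOUR = 3.93


def spot_savings_percent(on_demand: float, spot: float) -> float:
    """Return the percentage saved by paying the Spot price instead of On-Demand."""
    return (1 - spot / on_demand) * 100


savings = spot_savings_percent(ON_DEMAND_PER_HOUR, SPOT_PER_HOUR)
print(f"Spot saving: {savings:.1f}%")
```

With the prices above, the saving comes out at roughly 70%, which matches the figure in the list.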

Inside the instance, pull the Habana PyTorch Docker container:

docker pull vault.habana.ai/gaudi-docker/1.5.0/ubuntu20.04/habanalabs/pytorch-installer-1.11.0:1.5.0-610

Run the container interactively:

docker run -it --runtime=habana -e HABANA_VISIBLE_DEVICES=all -e OMPI_MCA_btl_vader_single_copy_mechanism=none --cap-add=sys_nice --net=host --ipc=host vault.habana.ai/gaudi-docker/1.5.0/ubuntu20.04/habanalabs/pytorch-installer-1.11.0:1.5.0-610

Fine-Tuning a Text Classification Model

Inside the container, clone the Optimum Habana repository, install the package, and then install the requirements of the text classification example:

git clone https://github.com/huggingface/optimum-habana.git

cd optimum-habana

pip install .

cd examples/text-classification

pip install -r requirements.txt

Now fine-tune a BERT-large model on the MRPC task of the GLUE benchmark:

python run_glue.py \
  --model_name_or_path bert-large-uncased-whole-word-masking \
  --gaudi_config_name Habana/bert-large-uncased-whole-word-masking \
  --task_name mrpc \
  --do_train \
  --do_eval \
  --per_device_train_batch_size 32 \
  --learning_rate 3e-5 \
  --num_train_epochs 3 \
  --max_seq_length 128 \
  --use_habana \
  --use_lazy_mode \
  --output_dir ./output/mrpc/
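To get a feel for how much work this command schedules, the number of optimizer steps can be estimated from the batch size and the epoch count. This is only a rough sketch. It assumes that the GLUE MRPC training split contains 3,668 sentence pairs, that the script runs on a single Gaudi device (the command above does not use a distributed launcher), that there is no gradient accumulation, and that the last partial batch of each epoch is kept. None of these figures come from the training log.

```python
import math

MRPC_TRAIN_EXAMPLES = 3668   # assumption: size of the GLUE MRPC training split
PER_DEVICE_BATCH_SIZE = 32   # from --per_device_train_batch_size
NUM_DEVICES = 1              # assumption: single-device run
NUM_EPOCHS = 3               # from --num_train_epochs


def total_training_steps(examples: int, batch_size: int, devices: int, epochs: int) -> int:
    """Estimate optimizer steps, keeping the last partial batch of each epoch."""
    steps_per_epoch = math.ceil(examples / (batch_size * devices))
    return steps_per_epoch * epochs


steps = total_training_steps(
    MRPC_TRAIN_EXAMPLES, PER_DEVICE_BATCH_SIZE, NUM_DEVICES, NUM_EPOCHS
)
print(f"Estimated optimizer steps: {steps}")
```

Under these assumptions the run performs a few hundred steps, which is consistent with a training time measured in minutes rather than hours.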

Training completes in about 2 minutes 12 seconds, and evaluation reports an F1 score of 0.8968 and an accuracy of 0.8505. These scores may improve with more training epochs.
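F1 and accuracy measure different things, so the two scores need not agree. The sketch below shows how both are derived from confusion-matrix counts. The counts are hypothetical: they were chosen only so that the results round to the scores reported above, and they are not taken from the actual evaluation output.

```python
def accuracy(tp: int, fp: int, fn: int, tn: int) -> float:
    """Fraction of all predictions that are correct."""
    return (tp + tn) / (tp + fp + fn + tn)


def f1_score(tp: int, fp: int, fn: int) -> float:
    """Harmonic mean of precision and recall for the positive class."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts (illustration only, not from the real run).
TP, FP, FN, TN = 265, 47, 14, 82

print(f"accuracy = {accuracy(TP, FP, FN, TN):.4f}")
print(f"F1       = {f1_score(TP, FP, FN):.4f}")
```

Note that true negatives count toward accuracy but do not appear in the F1 formula, which is one reason the two numbers can differ noticeably on a dataset where one class dominates.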

Important: Terminate your EC2 instance after training to avoid charges. The combination of Transformers, Habana Gaudi, and AWS Spot Instances offers a powerful, cost-effective solution for training NLP models.
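To put the cost in perspective, the sketch below compares the price of the training run itself with the price of leaving the instance running for a day. It uses the hourly prices and the training time quoted in this guide, and it assumes that cost is simply proportional to run time. Setup time, storage, and data transfer are ignored, so treat the results as rough estimates.

```python
# Figures quoted in this guide.
TRAINING_SECONDS = 2 * 60 + 12   # "about 2 minutes 12 seconds"
ON_DEMAND_PER_HOUR = 13.11       # USD
SPOT_PER_HOUR = 3.93             # USD


def cost_usd(seconds: float, hourly_rate: float) -> float:
    """Cost of running for `seconds`, assuming cost proportional to run time."""
    return seconds / 3600 * hourly_rate


training_on_demand = cost_usd(TRAINING_SECONDS, ON_DEMAND_PER_HOUR)
training_spot = cost_usd(TRAINING_SECONDS, SPOT_PER_HOUR)
idle_day_on_demand = cost_usd(24 * 3600, ON_DEMAND_PER_HOUR)

print(f"Training run, On-Demand: ${training_on_demand:.2f}")
print(f"Training run, Spot:      ${training_spot:.2f}")
print(f"Forgotten for 24 hours:  ${idle_day_on_demand:.2f}")
```

The training run costs well under a dollar either way, while an instance left running for a day costs hundreds of dollars at the On-Demand rate.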

For questions or feedback, visit the Hugging Face Forum.

Reach out to Habana for more details.