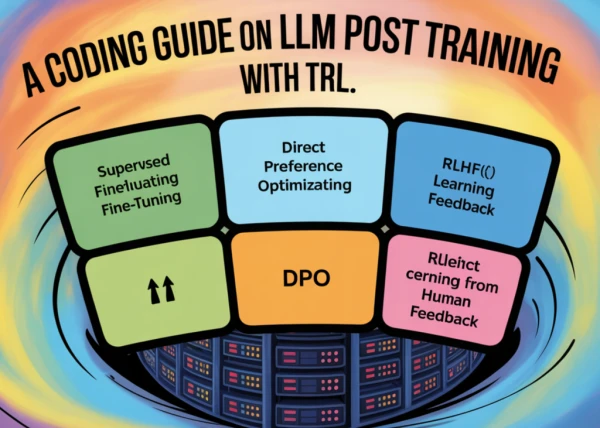

This article is a practical guide to post-training large language models (LLMs) with the TRL library. It covers the full pipeline, from supervised fine-tuning (SFT) to preference- and reward-based alignment techniques such as Direct Preference Optimization (DPO) and Group Relative Policy Optimization (GRPO). Along the way, it provides step-by-step code, best practices for preparing datasets, and tips for strengthening a model's reasoning capabilities.

From SFT to DPO and GRPO: A Practical Guide to LLM Post-Training with TRL


May 2, 2026 · 1:33 AM
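To make the two post-SFT objectives named in the summary more concrete, here is a minimal plain-Python sketch. It is not TRL's implementation, and the function names are hypothetical. It assumes you already have per-completion scalar rewards (for GRPO) and summed sequence log-probabilities from the policy and a frozen reference model (for DPO). GRPO scores each completion relative to the other completions sampled for the same prompt. DPO pushes the policy to widen its log-probability margin on the chosen response over the rejected one, measured relative to the reference model.

```python
import math
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-4) -> list[float]:
    """Hypothetical helper: GRPO-style advantages for one prompt's group of completions.

    Each reward is centered on the group mean and scaled by the group's
    standard deviation; eps avoids division by zero when all rewards are equal.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population standard deviation over the group
    return [(r - mu) / (sigma + eps) for r in rewards]


def dpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             beta: float = 0.1) -> float:
    """Hypothetical helper: sigmoid DPO loss for a single preference pair.

    Inputs are summed log-probabilities of the chosen and rejected responses
    under the policy being trained and under the frozen reference model.
    """
    chosen_margin = policy_chosen_logp - ref_chosen_logp
    rejected_margin = policy_rejected_logp - ref_rejected_logp
    x = beta * (chosen_margin - rejected_margin)
    # -log(sigmoid(x)) == softplus(-x), computed in a numerically stable form
    return max(-x, 0.0) + math.log1p(math.exp(-abs(x)))


if __name__ == "__main__":
    # Two correct (reward 1) and two incorrect (reward 0) completions for one prompt
    print(group_relative_advantages([1.0, 0.0, 0.0, 1.0]))
    # Policy prefers the chosen response more than the reference does -> loss below log(2)
    print(dpo_loss(-10.0, -12.0, -11.0, -11.0))
```

If the policy and reference assign identical log-probabilities, the DPO loss is exactly log(2), and it falls as the policy's preference for the chosen response grows. In the GRPO sketch, completions that beat their group's average get positive advantages and the rest get negative ones, so no separate value model is needed.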