NVIDIA's Isaac platform is bridging the gap between virtual simulation and real-world deployment for healthcare robots, enabling developers to train and test robotic systems in digital twins before they ever touch a patient.

"Simulation allows us to iterate rapidly without risk to patients or expensive hardware," said Dr. Amelia Chen, lead researcher at the Robotics Lab at Stanford Medicine, which has been using Isaac Sim to develop a robotic assistant for minimally invasive surgery.

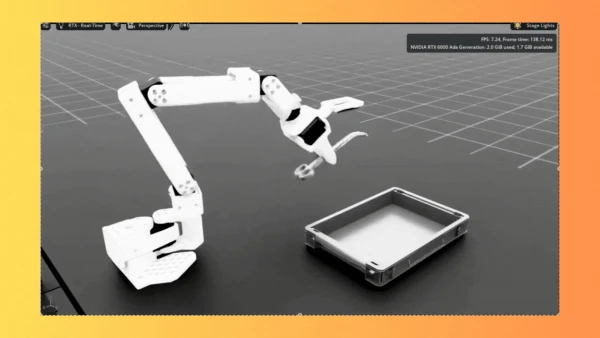

The platform combines high-fidelity physics simulation with AI-driven perception, allowing robots to learn tasks like surgical suturing, patient repositioning, and autonomous navigation in hospital corridors. Once trained in simulation, the neural network policies are transferred to physical robots, reducing development time from years to months.

Key components include:

- Isaac Sim: A virtual environment that mimics real-world physics, lighting, and sensor noise.

- Isaac GEM: Hardware-accelerated AI models for object detection, grasp planning, and movement prediction.

- Isaac ROS: Optimized ROS 2 packages for seamless integration with real robots.
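
To make the "sensor noise" idea concrete, here is a minimal sketch of domain randomization, a common sim-to-real technique in which lighting, friction, and sensor noise are varied from one training episode to the next so that a policy does not overfit to a single idealized simulation. It is plain Python and does not call the Isaac Sim API. The function names, parameter ranges, and the depth-sensor model are all assumptions made for illustration, and nothing here is confirmed as the method used by the teams quoted in this article.

```python
import random


def sample_episode_params(rng):
    """Draw one set of simulation parameters for a training episode.

    The ranges are hypothetical placeholders, not values from Isaac Sim.
    """
    return {
        "light_intensity": rng.uniform(0.5, 1.5),  # relative brightness
        "friction": rng.uniform(0.6, 1.0),         # surface friction coefficient
        "noise_std_m": rng.uniform(0.005, 0.02),   # depth noise, in meters
    }


def randomize_sensor_reading(true_depth_m, rng, noise_std_m=0.01, dropout_prob=0.05):
    """Return a noisy depth reading in meters, or None for a dropped sample."""
    if rng.random() < dropout_prob:
        return None  # mimic a missing measurement
    noisy = true_depth_m + rng.gauss(0.0, noise_std_m)
    return max(0.0, noisy)  # a depth reading cannot be negative


# Seeded generator so that a run is reproducible.
rng = random.Random(0)
params = sample_episode_params(rng)
readings = [
    randomize_sensor_reading(1.2, rng, noise_std_m=params["noise_std_m"])
    for _ in range(5)
]
print(params)
print(readings)
```

In a real pipeline, the sampled parameters would be applied to the simulator at the start of each episode, and the noisy readings would replace the ideal sensor values fed to the policy during training.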

Healthcare robotics startups are already using the suite. MedRobo, a Berlin-based company, recently demonstrated a robotic arm, trained entirely in simulation, that can autonomously prepare medication trays in hospital pharmacies. The company reported a 90% reduction in development costs.

Challenges remain, however. Regulatory hurdles, patient safety concerns, and the need for robust fail-safes mean widespread deployment is still years away. Even so, NVIDIA's Dr. Mark Patel, director of healthcare AI, is optimistic: "Simulation-to-real is the only scalable path to getting reliable robots into every hospital."