In July, Hugging Face organized a Flax/JAX Community Week, inviting participants to train transformer models in NLP and computer vision using TPUs provided by Google Cloud. Over 100 teams produced 170 models and 36 demos.

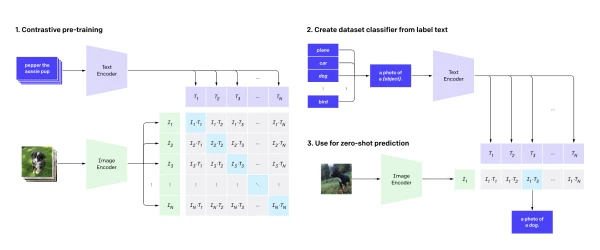

Our team, distributed across 12 time zones, fine-tuned OpenAI's CLIP network on satellite images and captions from the RSICD dataset. CLIP learns visual concepts from image-text pairs. We hypothesized that satellite imagery differs enough from everyday photos that fine-tuning would improve performance, a bet that our evaluation validated.

We augmented the images with random cropping, color jitter, and flipping, and generated additional captions by back-translating the originals with Marian MT models. This reduced overfitting, as our loss plots showed.

Our model enables text-based search over large satellite image collections. That is useful for defense, climate monitoring, and other applications, though it raises ethical concerns around surveillance. The trained model and demo are available on Hugging Face.

Training

Dataset: Primarily RSICD (~10,000 images from Google Earth, Baidu Map, etc., each with up to 5 captions), plus UCM and Sydney datasets.

Model: Fine-tuned CLIP using contrastive learning to align image and text embeddings.
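
To make the contrastive objective concrete, here is a minimal sketch of a CLIP-style symmetric loss in plain Python. It is an illustration, not our training code: the actual run used JAX/Flax on batched arrays, and the function names and the fixed `temperature=0.07` are assumptions made for this example (in CLIP the temperature is a learned parameter). Each image in a batch should score highest against its own caption, and each caption against its own image.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def log_softmax(row):
    """Numerically stable log-softmax over a list of logits."""
    m = max(row)
    log_z = m + math.log(sum(math.exp(x - m) for x in row))
    return [x - log_z for x in row]


def clip_contrastive_loss(image_embeds, text_embeds, temperature=0.07):
    """Symmetric cross-entropy over the image-text similarity matrix.

    image_embeds[i] and text_embeds[i] are assumed to be a matching pair.
    The temperature is fixed here for simplicity (an assumption of this sketch).
    """
    images = [l2_normalize(v) for v in image_embeds]
    texts = [l2_normalize(v) for v in text_embeds]
    n = len(images)

    # logits[i][j]: scaled cosine similarity between image i and caption j
    logits = [
        [sum(a * b for a, b in zip(img, txt)) / temperature for txt in texts]
        for img in images
    ]

    # Image-to-text direction: row i should put its mass on column i.
    loss_i2t = -sum(log_softmax(logits[i])[i] for i in range(n)) / n

    # Text-to-image direction: column j should put its mass on row j.
    columns = [list(col) for col in zip(*logits)]
    loss_t2i = -sum(log_softmax(columns[j])[j] for j in range(n)) / n

    return (loss_i2t + loss_t2i) / 2


# Toy batch: two orthogonal "embeddings", first paired correctly, then swapped.
aligned = clip_contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0], [0.0, 1.0]])
swapped = clip_contrastive_loss([[1.0, 0.0], [0.0, 1.0]], [[0.0, 1.0], [1.0, 0.0]])
print(aligned < swapped)
```

The loss is near zero when every pair lines up and grows when captions are matched to the wrong images, which is the signal fine-tuning uses to pull satellite images and their descriptions together in embedding space.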

Data Augmentation: Image augmentation via Torchvision; text augmentation via back-translation.
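
The text side of the augmentation can be sketched as follows. This is a hedged illustration rather than our pipeline: `augment_captions` and the stand-in translators are hypothetical names, and the de-duplication step is an assumption of the sketch. In the real project each round trip (English to another language and back) was performed by Marian MT models; here simple string functions stand in for them so the example runs without downloading anything.

```python
from typing import Callable, Iterable, List

# A round trip maps an English caption to a paraphrase,
# e.g. English -> French -> English via two translation models.
RoundTrip = Callable[[str], str]


def augment_captions(captions: Iterable[str], round_trips: Iterable[RoundTrip]) -> List[str]:
    """Return the original captions followed by any new, distinct paraphrases."""
    captions = list(captions)
    round_trips = list(round_trips)
    seen = set()
    augmented: List[str] = []

    def add(caption: str) -> None:
        # Compare case- and whitespace-insensitively so trivial variants are dropped.
        key = " ".join(caption.lower().split())
        if key and key not in seen:
            seen.add(key)
            augmented.append(caption.strip())

    for caption in captions:
        add(caption)
    for caption in captions:
        for round_trip in round_trips:
            add(round_trip(caption))
    return augmented


# Hypothetical stand-ins for model-based round trips.
def fake_via_french(caption: str) -> str:
    return caption.replace("many", "a lot of")


def fake_unchanged(caption: str) -> str:
    return caption  # a round trip that returns the input adds nothing


result = augment_captions(
    ["many planes are parked at the airport"],
    [fake_via_french, fake_unchanged],
)
print(result)
```

Back-translations that come back identical to the source caption are filtered out, so only genuinely new wordings are added to the training set.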

Read more: Project page | Model | Demo