🤗 Transformers has become the default library for data scientists worldwide to explore and build with state-of-the-art NLP models. With over 5,000 pre-trained models spanning 250 languages, it offers an accessible playground whichever framework you work in.

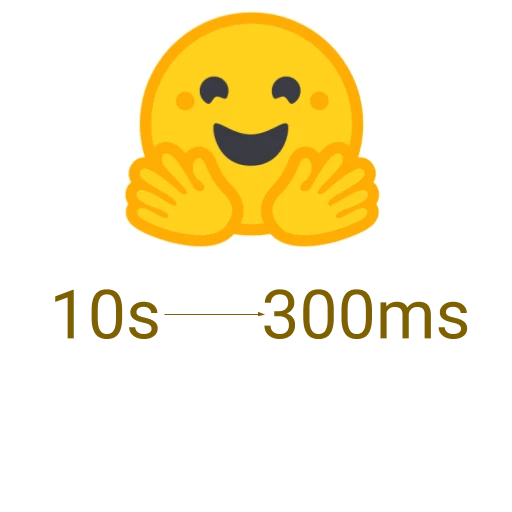

However, deploying these large models in production with peak performance and a scalable architecture remains a hard engineering challenge. That's why subscribers to our Accelerated Inference API rely on it for a 100x performance boost and built-in scalability for their NLP features. The last 10x of that boost is the hardest to get: it requires low-level optimizations specific to each model and each hardware target.

This post shares how we squeeze every drop of compute for our customers.

Getting to the First 10x Speedup

The first optimization phase uses the best techniques available in Hugging Face libraries, independent of the target hardware. We use the most efficient methods built into the model pipelines to reduce the computation in each forward pass. For text generation with GPT architectures, for example, attention is computed only for the last token at each new generation step, reusing what was already computed for earlier tokens.
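The last-token attention idea for text generation can be sketched in pure Python, assuming a single attention head, toy 2-dimensional vectors, and keys and values that are already projected. Each step stores the new token's key and value, then evaluates attention only for the newest token's query, reusing everything cached from earlier steps. `KVCache`, `attend`, and `full_recompute` are hypothetical names for illustration; 🤗 Transformers exposes its own cache through the `past_key_values` argument instead.

```python
import math
from typing import List

Vector = List[float]


def attend(query: Vector, keys: List[Vector], values: List[Vector]) -> Vector:
    """Scaled dot-product attention for a single query over a list of keys/values."""
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    m = max(scores)  # subtract the max score for numerical stability
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    return [sum(w * v[i] for w, v in zip(weights, values)) for i in range(len(values[0]))]


class KVCache:
    """Hypothetical cache holding the keys and values of every token seen so far."""

    def __init__(self) -> None:
        self.keys: List[Vector] = []
        self.values: List[Vector] = []

    def step(self, query: Vector, key: Vector, value: Vector) -> Vector:
        # Store only the new token's key/value; earlier entries are reused as-is.
        self.keys.append(key)
        self.values.append(value)
        # Attention is evaluated for the newest token's query only.
        return attend(query, self.keys, self.values)


def full_recompute(queries: List[Vector], keys: List[Vector], values: List[Vector]) -> Vector:
    """No cache: recompute causal attention for every position, keep the newest output."""
    outputs = [attend(queries[t], keys[: t + 1], values[: t + 1]) for t in range(len(queries))]
    return outputs[-1]


queries = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]

cache = KVCache()
for q, k, v in zip(queries, keys, values):
    cached_out = cache.step(q, k, v)  # one attention call per generated token

print(cached_out == full_recompute(queries, keys, values))
```

Both paths produce the same output for the newest token, but the cached path does one attention call per step instead of one per position in the sequence so far.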

Tokenization is often an inference bottleneck. We use the Rust implementation of model tokenizers from the 🤗 Tokenizers library, combined with smart caching, to improve overall latency by up to 10x.
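The post does not describe how its caching works, so the following is only a minimal sketch of one possible form: memoizing whole inputs with `functools.lru_cache`, so repeated requests skip tokenization entirely. `slow_tokenize` is a hypothetical stand-in (lowercase plus whitespace split) for a real tokenizer; in practice it would wrap a Rust-backed 🤗 Tokenizers object.

```python
from functools import lru_cache
from typing import Tuple

calls = {"tokenize": 0}  # counts how often the underlying tokenizer actually runs


def slow_tokenize(text: str) -> Tuple[str, ...]:
    """Hypothetical stand-in for an expensive tokenizer: lowercase and split on whitespace."""
    calls["tokenize"] += 1
    return tuple(text.lower().split())


@lru_cache(maxsize=4096)
def cached_tokenize(text: str) -> Tuple[str, ...]:
    # Returning an immutable tuple means callers cannot corrupt a cached result.
    return slow_tokenize(text)


requests = ["Hello world", "How are you?", "Hello world", "Hello world"]
for text in requests:
    tokens = cached_tokenize(text)

print(calls["tokenize"])  # 2: the repeated "Hello world" requests hit the cache
print(cached_tokenize.cache_info())
```

Whole-input memoization only pays off when traffic repeats exact strings; a bounded `maxsize` keeps memory use predictable under varied traffic.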

By combining these methods, we reliably achieve a 10x speedup over an out-of-the-box deployment. Because new versions of Transformers and Tokenizers are released every month, API customers get faster models without having to adopt each new optimization themselves.

Compilation FTW: The Hard-to-Get 10x

To maximize performance, we modify each model and compile it for the specific target hardware, CPU or GPU, chosen according to the model's size in memory and its demand profile. On CPU, techniques include:

- Graph optimization (removing unused flows)

- Layer fusion with specific CPU instructions

- Operation quantization
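The first technique, removing unused parts of the graph, can be sketched as dead-node elimination on a toy dataflow graph: walk backwards from the requested outputs and keep only the nodes they depend on. The graph format (a dict mapping each node name to the names of its inputs) and the node names are hypothetical simplifications; compilers such as ONNX Runtime run passes of this kind on real ONNX graphs, alongside others like node fusion.

```python
from typing import Dict, List, Set

# Hypothetical toy graph: node name -> names of the nodes it consumes.
Graph = Dict[str, List[str]]


def eliminate_dead_nodes(graph: Graph, outputs: List[str]) -> Graph:
    """Keep only the nodes that some requested output transitively depends on."""
    live: Set[str] = set()
    stack = list(outputs)
    while stack:
        node = stack.pop()
        if node in live:
            continue
        live.add(node)
        stack.extend(graph.get(node, []))  # visit this node's inputs next
    # Preserve the original order of the surviving nodes.
    return {name: inputs for name, inputs in graph.items() if name in live}


graph: Graph = {
    "input_ids": [],
    "embeddings": ["input_ids"],
    "encoder": ["embeddings"],
    "pooler": ["encoder"],          # only needed by sentence-level heads
    "token_logits": ["encoder"],
    "debug_stats": ["embeddings"],  # diagnostics that no inference output uses
}

pruned = eliminate_dead_nodes(graph, outputs=["token_logits"])
print(list(pruned))  # ['input_ids', 'embeddings', 'encoder', 'token_logits']
```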

Used out of the box, open-source tools such as ONNX Runtime may not produce optimal results and can lose accuracy, especially during quantization. There is no one-size-fits-all recipe: each model architecture needs its own approach. But deep integration of Transformers code with ONNX Runtime can unlock another 10x speedup.
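To see where quantization accuracy loss comes from, here is a minimal sketch of symmetric per-tensor int8 quantization in pure Python. The scheme is an assumption chosen for illustration, not necessarily what ONNX Runtime or our pipeline applies (ONNX Runtime offers whole-model quantization through `onnxruntime.quantization.quantize_dynamic`). Every weight is rounded to one of 255 integer levels, so each reconstructed weight can be off by up to half the scale, and very small weights collapse to zero.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map [-max|w|, max|w|] onto [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # round() is Python's round-half-to-even; clamp guards against float overshoot.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Map int8 levels back to approximate float weights."""
    return [v * scale for v in q]


weights = [0.02, -0.51, 0.33, 1.27, -0.004]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print(q)  # the smallest weight, -0.004, is quantized to 0
print(f"max abs error: {max_error:.4f} (bounded by scale / 2 = {scale / 2:.4f})")
```

A single outlier weight inflates the scale for the whole tensor, which is one reason finer-grained schemes (per-channel scales, keeping sensitive layers in float) are often needed to preserve accuracy.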

Unfair Advantage

The Transformer architecture revolutionized ML performance, but model sizes have ballooned from BERT's 110M parameters to GPT-3's 175B. Deploying these at scale demands a 100x speedup for real-time consumer apps.

As Hugging Face engineers, we have an unfair advantage: we work alongside the maintainers of 🤗 Transformers and 🤗 Tokenizers. Our open-source collaborations with Intel, NVIDIA, Qualcomm, Amazon, and Microsoft let us apply the latest hardware optimization techniques to the models we serve.

Experience the speed with a free trial. For custom inference optimization, join our 🤗 Expert Acceleration Program.