Hugging Face now offers a streamlined way to deploy embedding models through its Inference Endpoints service. Developers can quickly set up and manage embeddings (numerical representations of text) for search, clustering, and recommendation systems without handling the underlying infrastructure.
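To make the search use case concrete, here is a minimal sketch of ranking documents against a query by cosine similarity. The vectors are made-up three-dimensional toy values, not output from any real model, and the function names are illustrative only.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0  # treat a zero vector as dissimilar to everything
    return dot / (norm_a * norm_b)


def rank_documents(query_vec, doc_vecs):
    """Return (index, score) pairs sorted from most to least similar."""
    scores = [(i, cosine_similarity(query_vec, v)) for i, v in enumerate(doc_vecs)]
    return sorted(scores, key=lambda pair: pair[1], reverse=True)


# Toy embeddings (assumed values for illustration only).
docs = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.7, 0.7, 0.0]]
query = [0.9, 0.1, 0.0]

ranking = rank_documents(query, docs)
print([index for index, _ in ranking])
```

In practice the vectors would come from an embedding model and have hundreds of dimensions, but the ranking step stays the same.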

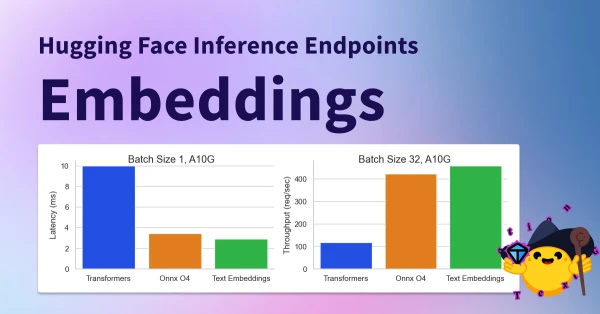

Key benefits include autoscaling and low latency. Users can choose from a wide range of pre-trained models, fine-tune them, and deploy with just a few clicks. The service integrates with the rest of the Hugging Face ecosystem, including the Hub and libraries such as Transformers. Pricing is pay-as-you-go, which keeps it accessible for both small projects and enterprise use.
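Once an endpoint is deployed, a client might call it over HTTPS roughly as sketched below. The URL and token are placeholders, and the `{"inputs": ...}` payload shape and bearer-token header are assumptions here; the exact request and response schema depends on the deployed model and container, so check the endpoint's own documentation.

```python
import json
import urllib.request

# Hypothetical values: replace with your endpoint's URL and access token.
ENDPOINT_URL = "https://example.invalid/embed"
API_TOKEN = "hf_xxx"


def build_embedding_request(texts, url=ENDPOINT_URL, token=API_TOKEN):
    """Build a POST request carrying the texts as a JSON payload."""
    payload = json.dumps({"inputs": texts}).encode("utf-8")  # assumed schema
    return urllib.request.Request(
        url,
        data=payload,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def fetch_embeddings(texts):
    """Send the request and decode the JSON response (requires a live endpoint)."""
    with urllib.request.urlopen(build_embedding_request(texts), timeout=30) as resp:
        return json.loads(resp.read().decode("utf-8"))


# Building the request does not touch the network.
request = build_embedding_request(["hello world"])
```

Separating request construction from sending keeps the payload easy to inspect and test without a running endpoint.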

This move aims to reduce the complexity of deploying NLP models in production, enabling faster iteration and more robust applications.