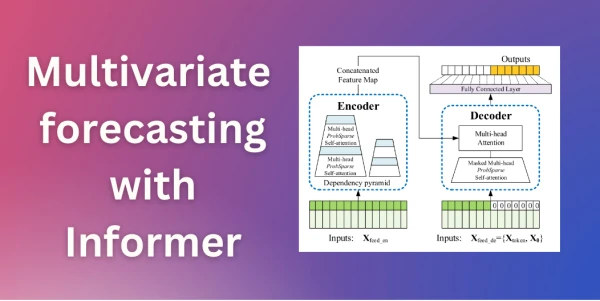

The Informer model, which won the AAAI 2021 Best Paper award, is now available in the Hugging Face Transformers library. This model addresses key limitations of the vanilla Transformer for long sequence time-series forecasting (LSTF). Specifically, for an input sequence of length T, it reduces the computational complexity of self-attention from O(T²) to O(T log T), and it mitigates the memory bottleneck of stacking layers through a distilling operation.

In this post, we demonstrate how to use Informer for multivariate probabilistic forecasting, that is, predicting the distribution of a future vector of time-series target values. The model architecture requires no change for multivariate input; it simply processes a sequence of vectors. The output distribution is modeled as independent (diagonal) emissions, a practical approximation for high-dimensional data because it avoids estimating a full covariance matrix between the variates.
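To make the diagonal assumption concrete, here is a minimal sketch of how the log-likelihood of one target vector factorizes into a sum over variates. It assumes a normal distribution per variate purely for illustration; the distribution the model actually emits may differ, and the names `gaussian_log_pdf`, `diagonal_log_likelihood`, and the sample numbers are hypothetical.

```python
import math


def gaussian_log_pdf(x, mean, std):
    """Log-density of a univariate normal distribution evaluated at x."""
    var = std * std
    return -0.5 * math.log(2.0 * math.pi * var) - (x - mean) ** 2 / (2.0 * var)


def diagonal_log_likelihood(target, means, stds):
    """Log-likelihood of one target vector under independent per-variate normals.

    With diagonal emissions the joint density is a product of per-variate
    densities, so the joint log-likelihood is a plain sum.
    """
    if not (len(target) == len(means) == len(stds)):
        raise ValueError("target, means and stds must have the same length")
    return sum(gaussian_log_pdf(x, m, s) for x, m, s in zip(target, means, stds))


# Hypothetical 3-variate forecast for a single future time step.
target = [1.0, -0.5, 2.0]
means = [0.8, 0.0, 2.5]
stds = [0.5, 1.0, 1.5]

# Training a probabilistic forecaster typically minimizes this quantity.
nll = -diagonal_log_likelihood(target, means, stds)
print(f"negative log-likelihood: {nll:.4f}")
```

The number of distribution parameters grows linearly with the number of variates here, whereas a full covariance matrix would grow quadratically.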

Key Innovations

- ProbSparse Attention: Exploits the long-tail distribution of attention scores by focusing on "active" queries that contribute most to attention, reducing complexity from O(T²) to O(T log T).

- Distilling: Halves the input length between encoder layers, cutting memory usage from O(N·T²) to O(N·T log T), where N is the number of encoder/decoder layers.
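The query selection behind ProbSparse attention can be sketched in a few lines of plain Python. This is a toy, single-head illustration under stated assumptions, not the library's implementation: it scores each query by "max score minus mean score", keeps the top `factor * ceil(ln T)` queries as active, and gives every other query the mean of the values. For clarity it computes the full score matrix, so the sketch itself is still quadratic; reaching O(T log T) requires estimating the sparsity measure from a sample of keys. The function name `probsparse_attention`, the `factor` parameter, and the sample data are hypothetical.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def softmax(scores):
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def probsparse_attention(queries, keys, values, factor=5):
    """Toy ProbSparse-style attention over lists of vectors (no masking)."""
    n_q, d = len(queries), len(queries[0])
    scale = 1.0 / math.sqrt(d)
    # Assumption: full score matrix, computed only to keep the sketch short.
    scores = [[dot(q, k) * scale for k in keys] for q in queries]
    # Sparsity measure: a large gap between max and mean means peaked attention.
    sparsity = [max(row) - sum(row) / len(row) for row in scores]
    # Number of active queries grows logarithmically with the sequence length.
    u = min(n_q, max(1, factor * math.ceil(math.log(n_q))))
    active = sorted(range(n_q), key=lambda i: sparsity[i], reverse=True)[:u]
    # "Lazy" queries attend almost uniformly, so they receive the mean value.
    mean_value = [sum(col) / len(values) for col in zip(*values)]
    output = [list(mean_value) for _ in range(n_q)]
    for i in active:
        weights = softmax(scores[i])
        output[i] = [dot(weights, col) for col in zip(*values)]
    return output, sorted(active)


# Hypothetical data: 8 time steps, 2-dimensional queries, keys and values.
keys = [[1, 0], [0, 1], [1, 1], [-1, 0], [0, -1], [-1, -1], [1, -1], [-1, 1]]
values = [[float(i), 1.0] for i in range(8)]
queries = [[4, 0], [0, 0], [0.1, 0.1], [0, 4], [0, 0], [3, 3], [0.1, 0], [0, 0.1]]

output, active = probsparse_attention(queries, keys, values, factor=1)
print("active query indices:", active)
```

With `factor=1` and 8 queries, only `ceil(ln 8) = 3` queries run a full softmax over the keys; the remaining rows of `output` are copies of the mean value vector.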

The blog provides a step-by-step tutorial covering environment setup, dataset loading, model definition, transformations, training, and inference. The complete code is available in a Colab notebook.

Informer targets long-horizon forecasting tasks, where the quadratic cost of full self-attention makes vanilla Transformers computationally impractical.