For all their amazing performance, state-of-the-art deep learning models often take a long time to train. To accelerate training, engineering teams rely on distributed training, a divide-and-conquer approach where clustered servers each keep a copy of the model, train it on a subset of the data, and exchange results to converge on a final model.

Graphics Processing Units (GPUs) have long been the go-to choice for deep learning. However, the rise of transfer learning is changing the landscape. Models are now rarely trained from scratch on massive datasets. Instead, they are frequently fine-tuned on smaller, task-specific datasets. These shorter training jobs make CPU-based clusters an attractive option that balances training time and cost.

What This Post Is About

In this guide, you will learn how to accelerate PyTorch training by distributing it across a cluster of Intel Xeon Scalable CPU servers, powered by the Ice Lake architecture and optimized software libraries. We'll build the cluster from scratch using virtual machines, and you can easily replicate the setup on your own infrastructure, either in the cloud or on-premises.

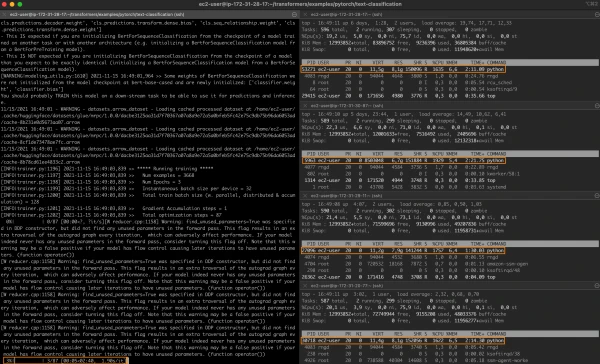

We'll fine-tune a BERT model on the MRPC dataset (from the GLUE benchmark) for text classification. The MRPC dataset contains roughly 5,800 sentence pairs from news sources, labeled for semantic equivalence. Once the cluster is ready, we'll run a baseline job on a single server, then scale to 2 and 4 servers to measure speedup.
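
To make "measuring speedup" concrete, here is a minimal sketch of how the single-server baseline could be compared with the 2-server and 4-server runs. The function names and the timings in `example_times` are placeholders invented for illustration, not measurements from this post.

```python
def speedup(baseline_seconds: float, distributed_seconds: float) -> float:
    """Return how many times faster the distributed run is than the baseline."""
    if baseline_seconds <= 0 or distributed_seconds <= 0:
        raise ValueError("training times must be positive")
    return baseline_seconds / distributed_seconds


def scaling_efficiency(
    baseline_seconds: float, distributed_seconds: float, num_nodes: int
) -> float:
    """Return speedup divided by node count (1.0 means perfect linear scaling)."""
    if num_nodes < 1:
        raise ValueError("num_nodes must be at least 1")
    return speedup(baseline_seconds, distributed_seconds) / num_nodes


# Placeholder timings in seconds (ASSUMED values, not results from this post).
# Replace them with the wall-clock times of your own training jobs.
example_times = {1: 1200.0, 2: 660.0, 4: 360.0}

baseline = example_times[1]
for nodes, seconds in example_times.items():
    s = speedup(baseline, seconds)
    e = scaling_efficiency(baseline, seconds, nodes)
    print(f"{nodes} node(s): speedup {s:.2f}x, efficiency {e:.0%}")
```

An efficiency below 100% is expected in practice, since part of each training step is spent exchanging results between servers rather than computing.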

Key topics covered:

- Required infrastructure and software building blocks

- Cluster setup

- Installing dependencies

- Running single-node and distributed jobs

Using Intel Servers

For best performance, we use Intel servers based on the Ice Lake architecture, which supports hardware features like Intel AVX-512 and Intel Vector Neural Network Instructions (VNNI). These accelerate deep learning training and inference operations.
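
Before relying on these instructions, it can be worth checking that a server actually advertises them. The sketch below assumes a Linux host, where the kernel lists CPU features on the `flags` line of `/proc/cpuinfo` and reports the two features as `avx512f` and `avx512_vnni`. The helper names are our own.

```python
from pathlib import Path

# Flag names as the Linux kernel reports them in /proc/cpuinfo.
REQUIRED_FLAGS = ("avx512f", "avx512_vnni")


def parse_cpu_flags(cpuinfo_text: str) -> set:
    """Collect the flags listed on the first 'flags' line of cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            return set(value.split())
    return set()


def missing_flags(cpuinfo_text: str, required=REQUIRED_FLAGS) -> list:
    """Return the required flags that the CPU does not advertise."""
    flags = parse_cpu_flags(cpuinfo_text)
    return [flag for flag in required if flag not in flags]


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        missing = missing_flags(cpuinfo.read_text())
        if missing:
            print(f"Missing CPU flags: {missing}")
        else:
            print("All required CPU flags are present")
    else:
        print("/proc/cpuinfo not found (this check assumes Linux)")
```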

All major cloud providers offer Ice Lake-based virtual machines:

- Amazon Web Services: EC2 M6i and C6i instances.

- Azure: Dv5/Dsv5-series, Ddv5/Ddsv5-series, and Edv5/Edsv5-series.

- Google Cloud Platform: N2 Compute Engine VMs.

You can also use your own servers. Servers based on the Cascade Lake architecture (Ice Lake's predecessor) work as well, since Cascade Lake includes AVX-512 and VNNI too.

Using Intel Performance Libraries

Intel has designed the Intel Extension for PyTorch to leverage AVX-512 and VNNI. This library provides out-of-the-box speedups for training and inference.

For distributed training, networking is often the bottleneck, as nodes exchange model state information. The Intel oneAPI Collective Communications Library (oneCCL) implements efficient communication patterns like all-reduce, optimized for deep learning. We'll use oneCCL as the backend for PyTorch's torch.distributed package.
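
To illustrate what an all-reduce achieves, here is a single-process simulation in plain Python. It is only a sketch of the semantics: it does not use oneCCL or `torch.distributed`, and the toy linear model, dataset, and function names are assumptions made for the example. Each simulated worker computes a gradient on its own shard, the all-reduce step gives every worker the same averaged gradient, and all model copies therefore stay identical.

```python
def local_gradient(weight, shard):
    """Mean gradient of the loss 0.5 * (w*x - y)**2 over one worker's shard."""
    return sum((weight * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values):
    """Simulate all-reduce: every worker ends up with the mean of all inputs."""
    mean = sum(values) / len(values)
    return [mean for _ in values]


# Toy dataset of (x, y) pairs with y = 2 * x, split evenly across two workers.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0), (4.0, 8.0)]
shards = [data[:2], data[2:]]

weight = 0.0  # every worker starts from the same copy of the model
learning_rate = 0.05

for step in range(50):
    # Each worker computes a gradient on its own subset of the data.
    grads = [local_gradient(weight, shard) for shard in shards]
    # After the all-reduce, all workers hold the same averaged gradient.
    synced = all_reduce_mean(grads)
    # Applying the same update everywhere keeps the model copies identical.
    weight -= learning_rate * synced[0]

print(f"learned weight: {weight:.4f}")
```

With equally sized shards, the averaged gradient equals the gradient computed on the full dataset, which is why the distributed run converges to the same model. In a real cluster, this exchange crosses the network at every step, which is where an optimized communication library matters.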

Setting Up Our Cluster

In this demo, we use Amazon EC2 c6i.16xlarge instances (64 vCPUs, 128 GB RAM, 25 Gbit/s networking) running Amazon Linux 2. We'll need four identical instances. To simplify setup, we configure one instance manually, create an Amazon Machine Image (AMI) from it, and launch three more instances from that AMI.

Networking requirements:

- Open port 22 for SSH access on all instances.

- Configure password-less SSH between the master instance and workers.
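
Once the keys are in place, a quick way to confirm password-less access is to attempt a login that is not allowed to prompt. The sketch below assumes the OpenSSH client is installed on the master instance. It relies on the `BatchMode=yes` and `ConnectTimeout` client options, and the helper names are our own. Host names or IP addresses are passed on the command line.

```python
import subprocess
import sys


def build_ssh_check(host, timeout_seconds=5):
    """Build an ssh command that fails instead of prompting for a password."""
    return [
        "ssh",
        "-o", "BatchMode=yes",  # never prompt for passwords or passphrases
        "-o", f"ConnectTimeout={timeout_seconds}",
        host,
        "true",  # trivial remote command; exit code 0 means the login worked
    ]


def can_login_without_password(host, timeout_seconds=5):
    """Return True if a non-interactive SSH login to host succeeds."""
    try:
        result = subprocess.run(
            build_ssh_check(host, timeout_seconds),
            capture_output=True,
            timeout=timeout_seconds + 5,
        )
    except (OSError, subprocess.TimeoutExpired):
        # ssh is not installed, or the connection hung.
        return False
    return result.returncode == 0


if __name__ == "__main__":
    # Usage: python check_ssh.py <host> [<host> ...]
    for host in sys.argv[1:]:
        status = "ok" if can_login_without_password(host) else "FAILED"
        print(f"{host}: {status}")
```

A failure does not necessarily mean the key is wrong: a closed port 22 or a host key that has not been accepted yet may also cause the non-interactive login to fail.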