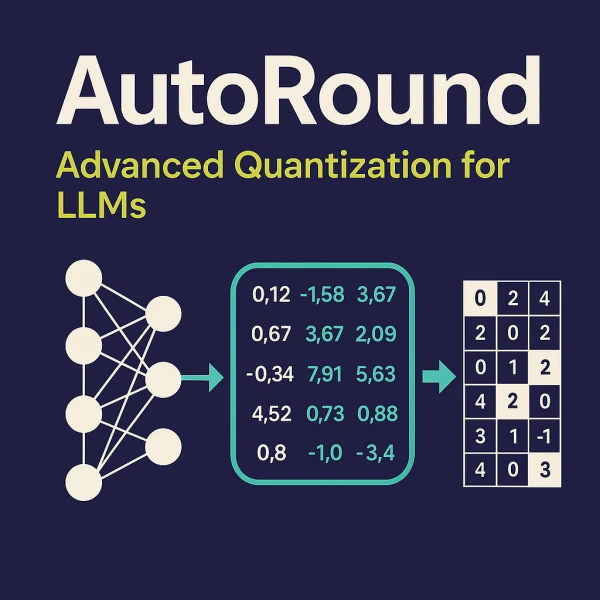

Intel has unveiled AutoRound, a new quantization method designed to compress large language models (LLMs) and vision-language models (VLMs) with minimal loss of accuracy. The technique, which is part of Intel's Neural Compressor toolkit, automatically selects the bit-width and rounding for each layer, reducing model size and inference time.

"AutoRound enables efficient deployment of LLMs and VLMs on Intel hardware with minimal performance degradation," the company stated in a blog post.

Traditional quantization typically rounds each weight to the nearest quantization level, but AutoRound tunes the rounding of each weight using signed gradient descent, which preserves more information. The method supports Intel's own processors as well as other x86 platforms, making it a versatile option for AI practitioners.
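To make the contrast with plain rounding concrete, here is a toy sketch in pure Python. It is not Intel's implementation. It assumes the rounding adjustment is a per-weight offset kept in [-0.5, 0.5] and updated using only the sign of a straight-through gradient estimate. The function names (`quantize`, `output_error`, `tune_offsets`), the step size, and the random calibration data are all hypothetical choices made for illustration.

```python
import math
import random


def quantize(weights, scale, offsets=None, bits=4):
    """Fake-quantize: round(w / scale + offset), clamp to the signed range, rescale."""
    qmin, qmax = -(2 ** (bits - 1)), 2 ** (bits - 1) - 1
    offsets = offsets or [0.0] * len(weights)
    out = []
    for w, v in zip(weights, offsets):
        q = math.floor(w / scale + v + 0.5)  # round half up; v shifts the decision
        q = max(qmin, min(qmax, q))
        out.append(q * scale)
    return out


def output_error(weights, q_weights, inputs):
    """Sum of squared differences between full-precision and quantized dot products."""
    err = 0.0
    for x in inputs:
        y = sum(w * xi for w, xi in zip(weights, x))
        yq = sum(q * xi for q, xi in zip(q_weights, x))
        err += (yq - y) ** 2
    return err


def tune_offsets(weights, scale, inputs, steps=200, lr=0.005, bits=4):
    """Toy sign-based tuning of rounding offsets (illustrative assumption only)."""
    offsets = [0.0] * len(weights)  # all zeros is plain round-to-nearest
    best = list(offsets)
    best_err = output_error(weights, quantize(weights, scale, offsets, bits), inputs)
    for _ in range(steps):
        qw = quantize(weights, scale, offsets, bits)
        grads = [0.0] * len(weights)
        for x in inputs:
            diff = sum((q - w) * xi for q, w, xi in zip(qw, weights, x))
            for i, xi in enumerate(x):
                # Straight-through estimate; the positive factor `scale` is
                # omitted because only the sign of the gradient is used.
                grads[i] += 2.0 * diff * xi
        for i, g in enumerate(grads):
            step = lr if g > 0 else -lr if g < 0 else 0.0
            offsets[i] = max(-0.5, min(0.5, offsets[i] - step))
        err = output_error(weights, quantize(weights, scale, offsets, bits), inputs)
        if err < best_err:  # keep the best offsets seen so far
            best, best_err = list(offsets), err
    return best, best_err


random.seed(0)
weights = [random.uniform(-0.7, 0.7) for _ in range(16)]
inputs = [[random.uniform(-1.0, 1.0) for _ in range(16)] for _ in range(32)]
scale = max(abs(w) for w in weights) / 7  # map the largest weight to level 7

rtn_err = output_error(weights, quantize(weights, scale), inputs)
offsets, tuned_err = tune_offsets(weights, scale, inputs)
print(f"round-to-nearest error: {rtn_err:.6f}")
print(f"tuned-offset error:     {tuned_err:.6f}")
```

Because the loop starts from zero offsets and keeps the best result seen, the tuned error can never exceed the round-to-nearest error on the calibration inputs. How much it improves depends on the data.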

In tests on models such as OPT‑6.7B and LLaMA‑2‑13B, AutoRound achieved near‑lossless compression at 4‑bit precision, reducing memory footprint by up to 4x while maintaining over 99% of the original model's accuracy on the benchmarks evaluated.
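The "up to 4x" figure matches simple arithmetic if the baseline is 16-bit weights, which is an assumption here rather than something the announcement states. A nominal 13 billion parameters take about 26 GB at 16 bits and about 6.5 GB at 4 bits. The sketch below also shows why real savings are usually a little under 4x: group-wise quantization stores extra scale values. The group size of 128 and the 16-bit scales are illustrative assumptions, and `weight_memory_gb` is a hypothetical helper.

```python
def weight_memory_gb(num_params, bits_per_weight, group_size=None, scale_bits=16):
    """Estimate weight storage in GB (1 GB = 1e9 bytes).

    If group_size is given, add one scale of `scale_bits` per group of weights.
    Zero points and non-weight tensors are ignored in this rough estimate.
    """
    total_bits = num_params * bits_per_weight
    if group_size:
        total_bits += (num_params / group_size) * scale_bits
    return total_bits / 8 / 1e9


params = 13e9  # nominal parameter count for a 13B model (assumption)

fp16_gb = weight_memory_gb(params, 16)
int4_gb = weight_memory_gb(params, 4)
int4_grouped_gb = weight_memory_gb(params, 4, group_size=128)

print(f"16-bit weights:              {fp16_gb:.2f} GB")
print(f"4-bit weights:               {int4_gb:.2f} GB ({fp16_gb / int4_gb:.2f}x smaller)")
print(f"4-bit with per-group scales: {int4_grouped_gb:.2f} GB "
      f"({fp16_gb / int4_grouped_gb:.2f}x smaller)")
```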

Intel expects AutoRound to accelerate adoption of LLMs in edge and data‑center environments where memory and compute resources are constrained.