Dreambooth is a powerful technique for teaching new concepts to Stable Diffusion through specialized fine-tuning. However, selecting the right hyperparameters can be challenging, and overfitting is a common pitfall. After extensive experimentation, we present our findings and the settings that worked best for us.

TL;DR: Recommended Settings

- Dreambooth overfits quickly. Use a low learning rate and gradually increase training steps until quality is satisfactory.

- For faces, 800-1200 steps with a batch size of 2 and learning rate of 1e-6 work well.

- Prior preservation is crucial for faces to avoid overfitting; for other subjects, its impact is minimal.

- If images are noisy or degraded, suspect overfitting. Use the DDIM scheduler or more inference steps (~100).

- Fine-tuning the text encoder alongside the UNet significantly improves quality but requires more memory (ideally a 24 GB GPU). On 16 GB GPUs, use 8-bit Adam, fp16 training, or gradient accumulation.

- EMA doesn't notably improve results.

- The token sks is not necessary; choose descriptive terms for your subject.

Learning Rate Impact

Dreambooth overfits quickly. In all experiments, lower learning rates yielded better results. Tune both learning rate and steps for your dataset.

Experiment Settings

We used the train_dreambooth.py script with AdamW on 2x 40GB A100s. For objects, we trained with batch size 4 for 400 steps at high (5e-6) and low (2e-6) learning rates. For faces, we used prior preservation, batch size 2, 800-1200 steps, and the same learning rates.
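The runs described here can be written down as a small sweep. The sketch below assumes the script is the train_dreambooth.py example from the diffusers repository and that it accepts the flags shown; the names RUNS, build_args, and sweep are illustrative only, and data paths, prompts, and the base model argument are deliberately left out.

```python
# Hypothetical sweep over the settings described in the text. Flag names assume
# the diffusers example script train_dreambooth.py; data paths, prompts, and the
# base model argument are omitted and must be supplied separately.
LEARNING_RATES = {"high": 5e-6, "low": 2e-6}

RUNS = [
    # (subject kind, batch size, training steps, prior preservation)
    # Prior preservation is only mentioned for faces, so it is off for objects.
    ("object", 4, 400, False),
    ("face", 2, 800, True),
    ("face", 2, 1200, True),
]


def build_args(batch_size, steps, learning_rate, prior_preservation):
    """Return the hyperparameter part of a train_dreambooth.py command line."""
    args = [
        f"--train_batch_size={batch_size}",
        f"--max_train_steps={steps}",
        f"--learning_rate={learning_rate}",
    ]
    if prior_preservation:
        args.append("--with_prior_preservation")
    return args


def sweep():
    """Yield (label, args) for every run at both learning rates."""
    for kind, batch_size, steps, prior in RUNS:
        for name, lr in LEARNING_RATES.items():
            label = f"{kind}-{steps}steps-{name}lr"
            yield label, build_args(batch_size, steps, lr, prior)


if __name__ == "__main__":
    for label, args in sweep():
        print(label, " ".join(args))
```

Keeping the sweep as data makes it easy to add an intermediate learning rate or step count when tuning for a new dataset.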

Cat Toy

High LR resulted in overfitting, while low LR preserved the object's identity better.

Pighead

High LR produced color artifacts (reducible with more inference steps), while low LR gave cleaner outputs.

Mr. Potato Head

Similar pattern: High LR led to artifacts; low LR maintained quality.

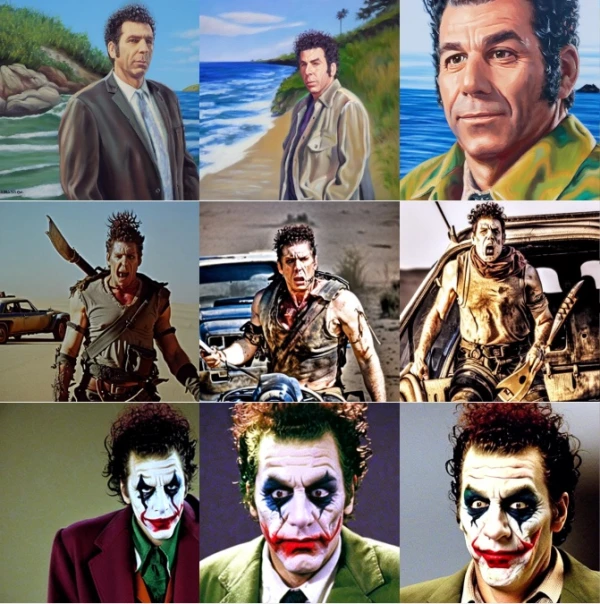

Human Face

Training on faces required more steps and prior preservation. Even then, results were not perfect, highlighting the difficulty of adding human subjects.

Using Prior Preservation for Faces

Prior preservation helps prevent overfitting when training on faces. It adds images of the same class, generated by the base model, to the training data so that the fine-tuned model retains its prior knowledge of that class.
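One common way to express prior preservation is as a second loss term computed on the base-model class images and added to the loss on the subject images. The text does not spell out the objective, so the following is a minimal sketch under that assumption: plain Python lists stand in for tensors, and prior_loss_weight is an assumed weighting knob, not a value reported here.

```python
# Sketch of a prior-preservation objective. Plain Python lists stand in for
# tensors; prior_loss_weight is an assumed knob, not a value from the text.

def mse(pred, target):
    """Mean squared error between two equally sized flat lists."""
    if len(pred) != len(target) or not pred:
        raise ValueError("inputs must be non-empty and the same length")
    return sum((p - t) ** 2 for p, t in zip(pred, target)) / len(pred)


def prior_preservation_loss(instance_pred, instance_target,
                            class_pred, class_target,
                            prior_loss_weight=1.0):
    """Loss on the subject images plus a weighted loss on class images."""
    instance_loss = mse(instance_pred, instance_target)
    prior_loss = mse(class_pred, class_target)
    return instance_loss + prior_loss_weight * prior_loss


if __name__ == "__main__":
    loss = prior_preservation_loss(
        [0.0, 1.0], [0.0, 0.0],   # subject (instance) prediction and target
        [2.0, 2.0], [2.0, 0.0],   # class-image prediction and target
    )
    print(loss)
```

Setting the weight to zero recovers plain fine-tuning on the subject images alone, which is the setting most prone to overfitting on faces.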

Effect of Schedulers

If overfitting causes noisy outputs, switch to the DDIM scheduler or increase the number of inference steps to around 100.
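As a rough illustration, swapping the scheduler at inference time might look like the sketch below. It assumes the diffusers and torch packages are installed and a CUDA GPU is available; model_dir is a hypothetical path to the fine-tuned pipeline, and the function name is illustrative. Imports are deferred into the function so the file can be loaded on a machine without the GPU stack.

```python
# Sketch: generate with the DDIM scheduler and about 100 inference steps.
# Assumes diffusers and torch are installed and a CUDA GPU is available.
# model_dir is a hypothetical path to the fine-tuned pipeline.
INFERENCE_STEPS = 100


def generate_with_ddim(model_dir, prompt, steps=INFERENCE_STEPS):
    """Load a fine-tuned pipeline, switch it to DDIM, and return one image."""
    import torch
    from diffusers import DDIMScheduler, StableDiffusionPipeline

    pipe = StableDiffusionPipeline.from_pretrained(
        model_dir, torch_dtype=torch.float16
    )
    # Reuse the existing scheduler config so only the sampling algorithm changes.
    pipe.scheduler = DDIMScheduler.from_config(pipe.scheduler.config)
    pipe = pipe.to("cuda")
    return pipe(prompt, num_inference_steps=steps).images[0]
```

Because only the scheduler and step count change, the same checkpoint can be compared before and after without retraining.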

Fine-tuning the Text Encoder

Fine-tuning the text encoder together with the UNet greatly improves quality, especially for faces. It requires more memory but is manageable with gradient accumulation and fp16.
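Gradient accumulation trades memory for time: a smaller micro-batch is processed several times before each optimizer update, so the effective batch size stays the same. The sketch below shows that arithmetic and turns it into flags, assuming the diffusers train_dreambooth.py example and its --train_text_encoder, --use_8bit_adam, --mixed_precision, and --gradient_accumulation_steps options; the helper names are illustrative.

```python
import math


def accumulation_steps(target_batch_size, micro_batch_size):
    """Micro-batches to accumulate so the effective batch reaches the target."""
    if target_batch_size < 1 or micro_batch_size < 1:
        raise ValueError("batch sizes must be positive")
    return math.ceil(target_batch_size / micro_batch_size)


def low_memory_flags(target_batch_size, micro_batch_size=1):
    """Flags for text encoder fine-tuning on a smaller GPU (assumed flag names)."""
    steps = accumulation_steps(target_batch_size, micro_batch_size)
    return [
        "--train_text_encoder",
        "--use_8bit_adam",
        "--mixed_precision=fp16",
        f"--train_batch_size={micro_batch_size}",
        f"--gradient_accumulation_steps={steps}",
    ]


if __name__ == "__main__":
    # Face setting from the text: batch size 2, run as two micro-batches of 1.
    print(" ".join(low_memory_flags(target_batch_size=2)))
```

Whether all three memory savers are needed at once depends on the GPU; the text lists them as alternatives, so they can also be enabled one at a time.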

Epilogue: Textual Inversion + Dreambooth

Combining Textual Inversion with Dreambooth can yield even better results, as it allows learning a new token embedding alongside fine-tuning.