Few-shot learning is transforming natural language processing by enabling models to make accurate predictions with minimal labeled data. This technique, which relies on large language models like GPT-Neo, allows users to provide just a few examples at inference time instead of requiring extensive fine-tuning datasets.

Understanding Few-Shot Learning

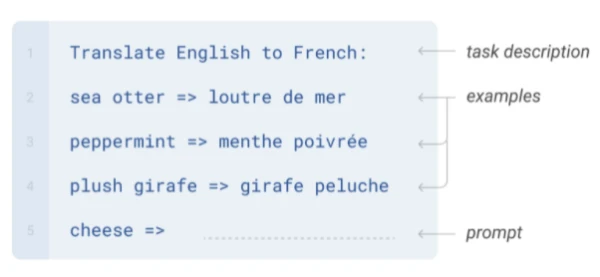

Few-shot learning involves feeding a model a small number of examples to guide its output. In NLP, models like GPT-Neo and GPT-3 leverage their pre-training on vast text corpora to generalize to new tasks. A typical few-shot prompt includes a task description, a few examples, and a prompt for the model to complete.
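The three-part prompt structure described above can be sketched in a few lines of Python. This is an illustrative sketch, not a required format: the helper name `build_few_shot_prompt`, the `Tweet:`/`Sentiment:` field labels, the sample tweets, and the `###` separator between blocks are all assumptions chosen for the example.

```python
def build_few_shot_prompt(task_description, examples, query, separator="###"):
    """Assemble a few-shot prompt: task description, worked examples, open query.

    The "Tweet"/"Sentiment" labels are hypothetical; use whatever fits your task.
    """
    blocks = [task_description.strip()]
    for text, label in examples:
        blocks.append(f"Tweet: {text}\nSentiment: {label}")
    # The final block leaves the label blank for the model to complete.
    blocks.append(f"Tweet: {query}\nSentiment:")
    return f"\n{separator}\n".join(blocks)


examples = [
    ("I loved the new Batman movie!", "Positive"),
    ("The service at this restaurant was awful.", "Negative"),
    ("The package arrived on Tuesday.", "Neutral"),
]
prompt = build_few_shot_prompt(
    "Classify the sentiment of each tweet as Positive, Negative, or Neutral.",
    examples,
    "This update made the app so much faster.",
)
print(prompt)
```

Ending the prompt on an unfinished `Sentiment:` line nudges the model to continue the pattern set by the examples rather than produce free-form text.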

What is GPT-Neo?

GPT-Neo, developed by EleutherAI, is an open-source family of transformer models based on the GPT architecture. These models are trained on the Pile dataset, making them well-suited for tasks that align with that dataset's distribution.

Using the Accelerated Inference API

Hugging Face's Accelerated Inference API offers a simple way to deploy GPT-Neo for few-shot learning. Below is a Python code snippet to get started:

import json

import requests

API_TOKEN = ""  # Your Hugging Face API token
API_URL = "https://api-inference.huggingface.co/models/EleutherAI/gpt-neo-2.7B"


def query(payload="", parameters=None, options=None):
    """Send a prompt to the hosted GPT-Neo model and return the generated text."""
    if options is None:
        # Avoid a mutable default argument; caching is off unless overridden.
        options = {"use_cache": False}
    headers = {"Authorization": f"Bearer {API_TOKEN}"}
    body = {"inputs": payload, "parameters": parameters, "options": options}
    response = requests.post(API_URL, headers=headers, data=json.dumps(body))
    try:
        response.raise_for_status()
    except requests.exceptions.HTTPError:
        # The error field may be a string or a list of strings; handle both.
        error = response.json()["error"]
        if isinstance(error, list):
            error = " ".join(error)
        return f"Error: {error}"
    else:
        return response.json()[0]["generated_text"]


parameters = {
    "max_new_tokens": 25,
    "temperature": 0.5,
    "end_sequence": "###",
}

prompt = "...."  # Your few-shot prompt

data = query(prompt, parameters)

Practical Tips for Best Results

- GPT-Neo (2.7B) is roughly 60x smaller than GPT-3 (175B parameters), so it generalizes less well from zero or one example and typically needs 3-4 examples for good performance.

- Adjust hyperparameters like `temperature` (lower for less randomness) and `end_sequence` to control generation.

- Example quality matters: well-crafted prompts yield better predictions.
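To illustrate how an end sequence helps control generation, here is a minimal post-processing sketch. It assumes the returned text may begin with an echo of the prompt and may run past the `###` marker; whether either happens depends on the API settings in use. The helper name `extract_completion` and the sample strings are hypothetical.

```python
def extract_completion(generated_text, prompt, end_sequence="###"):
    """Return only the newly generated text, cut at the first end sequence."""
    # Drop the echoed prompt if the response starts with it (an assumption).
    if generated_text.startswith(prompt):
        completion = generated_text[len(prompt):]
    else:
        completion = generated_text
    # Keep only what precedes the first end sequence, if one is present.
    completion = completion.split(end_sequence, 1)[0]
    return completion.strip()


prompt = "Tweet: Great game last night!\nSentiment:"
raw = prompt + " Positive\n###\nTweet: Another one"
print(extract_completion(raw, prompt))  # Positive
```

Trimming on the client side like this is a fallback: if the model stops at the end sequence on its own, the split simply leaves the text unchanged.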

Responsible Use

Always consider the ethical implications of generated text. Avoid biased or harmful content, and ensure transparency about AI-generated outputs.