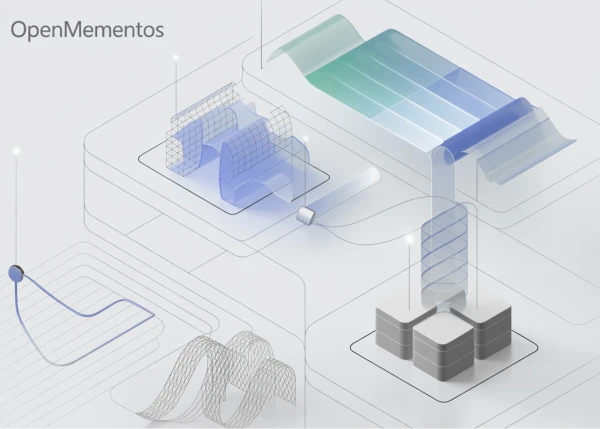

In this tutorial, we explore Microsoft's OpenMementos dataset in a Colab-ready workflow and examine how its reasoning traces are organized into blocks and mementos (the short summaries attached to each block). We stream the dataset, parse its special-token format, inspect how reasoning and summaries are laid out, and measure how much the memento representation compresses the reasoning across domains.

As we progress, we visualize dataset patterns, align the streamed format with the richer full subset, simulate inference-time compression, and prepare the data for supervised fine-tuning. Along the way, we build both an intuitive and a technical understanding of how OpenMementos pairs long-form reasoning with compact summaries that support efficient training and inference.

Setting Up the Environment

We start by installing the required libraries and importing the core tools for dataset streaming, parsing, analysis, and visualization. We then open the dataset in streaming mode so that we can inspect it without downloading it in full. Reading the first example shows us the dataset schema, the problem format, and the domain and source metadata attached to each reasoning trace.

!pip install -q -U datasets transformers matplotlib pandas

import re, itertools, textwrap
from collections import Counter
from typing import Dict

import pandas as pd
import matplotlib.pyplot as plt
from datasets import load_dataset

DATASET = "microsoft/OpenMementos"

# Streaming mode yields rows lazily instead of downloading the whole dataset.
ds_stream = load_dataset(DATASET, split="train", streaming=True)
first_row = next(iter(ds_stream))

print("Columns :", list(first_row.keys()))
print("Domain :", first_row["domain"], "| Source:", first_row["source"])
print("Problem head:", first_row["problem"][:160].replace("\n", " "), "...")

Parsing Reasoning Traces

We define a regex-based parser that extracts reasoning blocks, memento summaries, the main thinking section, and the final answer from each response. We test the parser on the first streamed example and confirm that the block-summary structure is being captured correctly.

BLOCK_RE = re.compile(r"<\|block_start\|>(.*?)<\|block_end\|>", re.DOTALL)
SUMMARY_RE = re.compile(r"<\|summary_start\|>(.*?)<\|summary_end\|>", re.DOTALL)
THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)

def parse_memento(response: str) -> Dict:
    # Non-greedy matches with DOTALL so that multi-line blocks are captured whole.
    blocks = [m.strip() for m in BLOCK_RE.findall(response)]
    summaries = [m.strip() for m in SUMMARY_RE.findall(response)]
    think_m = THINK_RE.search(response)
    # The final answer is whatever follows the closing </think> tag.
    final_ans = response.split("</think>")[-1].strip() if "</think>" in response else ""
    return {"blocks": blocks, "summaries": summaries,
            "reasoning": (think_m.group(1) if think_m else ""),
            "final_answer": final_ans}

parsed = parse_memento(first_row["response"])

print(f"\n→ {len(parsed['blocks'])} blocks, {len(parsed['summaries'])} mementos parsed")
print("First block :", parsed["blocks"][0][:140].replace("\n", " "), "...")
print("First memento :", parsed["summaries"][0][:140].replace("\n", " "), "...")
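To see what the parser returns without streaming anything, the sketch below applies the same regular expressions to a small hand-written trace. The sample text and the helper name split_trace are made up for illustration, and the layout (each block followed by its memento inside the think section) is an assumption based on the token names above rather than a verified sample from the dataset.

```python
import re

BLOCK_RE = re.compile(r"<\|block_start\|>(.*?)<\|block_end\|>", re.DOTALL)
SUMMARY_RE = re.compile(r"<\|summary_start\|>(.*?)<\|summary_end\|>", re.DOTALL)

# Hypothetical trace, written by hand for illustration only.
SAMPLE = (
    "<think>"
    "<|block_start|>Let x be the unknown. Then 2x + 3 = 11, so 2x = 8.<|block_end|>"
    "<|summary_start|>2x = 8<|summary_end|>"
    "<|block_start|>Dividing both sides by 2 gives x = 4.<|block_end|>"
    "<|summary_start|>x = 4<|summary_end|>"
    "</think>"
    "The answer is 4."
)

def split_trace(response):
    """Return (blocks, mementos, final_answer) for one response string."""
    blocks = [b.strip() for b in BLOCK_RE.findall(response)]
    mementos = [s.strip() for s in SUMMARY_RE.findall(response)]
    final = response.split("</think>")[-1].strip() if "</think>" in response else ""
    return blocks, mementos, final

blocks, mementos, final = split_trace(SAMPLE)
for i, (b, m) in enumerate(zip(blocks, mementos), start=1):
    print(f"block {i}: {len(b):3d} chars -> memento: {m!r}")
print("final answer:", final)
```

Each block pairs with the memento at the same index, which is the invariant the later analysis relies on when it skips examples whose counts do not match.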

Analyzing Compression Across Domains

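We stream the first 500 examples and, for each one, compute the number of blocks, the word and character totals for blocks and for mementos, and the memento-to-block ratio (a lower ratio means stronger compression). Examples with no blocks, or with a different number of blocks and mementos, are skipped. We then aggregate the results into per-domain medians to compare how the dataset behaves across domains.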

N_SAMPLES = 500
rows = []

for i, ex in enumerate(itertools.islice(
        load_dataset(DATASET, split="train", streaming=True), N_SAMPLES)):
    p = parse_memento(ex["response"])
    # Skip traces with no blocks or with mismatched block/memento counts.
    if not p["blocks"] or len(p["blocks"]) != len(p["summaries"]):
        continue
    blk_c = sum(len(b) for b in p["blocks"])
    sum_c = sum(len(s) for s in p["summaries"])
    blk_w = sum(len(b.split()) for b in p["blocks"])
    sum_w = sum(len(s.split()) for s in p["summaries"])
    rows.append(dict(domain=ex["domain"], source=ex["source"],
                     n_blocks=len(p["blocks"]),
                     block_chars=blk_c, summ_chars=sum_c,
                     block_words=blk_w, summ_words=sum_w,
                     compress_char=sum_c / max(blk_c, 1),
                     compress_word=sum_w / max(blk_w, 1)))
    if (i + 1) % 100 == 0:
        print(f" processed {i+1}/{N_SAMPLES}")

df = pd.DataFrame(rows)

print(f"\nAnalyzed {len(df)} rows. Domain counts:")
print(df["domain"].value_counts().to_string())

per_dom = df.groupby("domain").agg(
    n=("domain", "count"),
    median_blocks=("n_blocks", "median"),
    median_block_words=("block_words", "median"),
    median_summ_words=("summ_words", "median"),
    median_char_ratio=("compress_char", "median"),
    median_word_ratio=("compress_word", "median"),
).round(3)

print("\nPer-domain medians (ratio = mementos / blocks):")
print(per_dom.to_string())
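The ratios in this table are mementos divided by blocks, so smaller is better, whereas compression is often quoted the other way round as an "N× shorter" factor. The sketch below shows the conversion on made-up numbers using only the standard library. The domain labels and word counts are hypothetical placeholders, not values measured on OpenMementos.

```python
from statistics import median

# Hypothetical (block_words, memento_words) totals for a few traces per domain.
samples = {
    "domain_a": [(1200, 150), (800, 100), (2000, 400)],
    "domain_b": [(900, 300), (600, 150)],
}

def ratio(block_words, memento_words):
    """Memento-to-block ratio, guarded against empty blocks."""
    return memento_words / max(block_words, 1)

medians = {}
for domain, pairs in samples.items():
    medians[domain] = median(ratio(b, m) for b, m in pairs)

for domain, med in medians.items():
    # The reciprocal turns a mementos/blocks ratio into an "N times shorter" factor.
    print(f"{domain}: median ratio {med:.3f} (about {1 / med:.1f}x shorter)")
```

Keeping both forms in mind avoids misreading a ratio of 0.125 and a factor of 8× as different results.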

Simulating Inference-Time Compression

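We implement a function that compresses a trace by keeping the last keep_last_k blocks in full, together with their mementos, and replacing every earlier block with its memento alone. If the blocks and mementos cannot be paired one-to-one, the function returns the trace unchanged.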

def compress_trace(response: str, keep_last_k: int = 1) -> str:
    blocks, summaries = BLOCK_RE.findall(response), SUMMARY_RE.findall(response)
    # Leave the trace untouched if blocks and mementos cannot be paired up.
    if not blocks or len(blocks) != len(summaries):
        return response
    out, n = ["<think>"], len(blocks)
    for i, (b, s) in enumerate(zip(blocks, summaries)):
        # Only the last keep_last_k blocks keep their full text.
        if i >= n - keep_last_k:
            out.append(f"<|block_start|>{b}<|block_end|>")
        # Every block, kept or dropped, contributes its memento.
        out.append(f"<|summary_start|>{s}<|summary_end|>")
    out.append("</think>")
    # Append the final answer only when the think section is closed; otherwise
    # split() would return the whole response and duplicate it.
    if "</think>" in response:
        out.append(response.split("</think>")[-1])
    return "\n".join(out)

orig, comp = first_row["response"], compress_trace(first_row["response"], 1)

print(f"\nOriginal : {len(orig):>8,} chars")
print(f"Compressed : {len(comp):>8,} chars ({len(comp)/len(orig)*100:.1f}% of original)")
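Comparing total lengths shows the end state, but the benefit at inference time is easier to see by tracking how large the context would be while each block is being generated. The sketch below does this in characters on hand-made strings. The function name context_sizes and the policy of dropping a block as soon as its memento has been written are assumptions for illustration, not a description of how the dataset authors or any particular model handle eviction.

```python
def context_sizes(blocks, mementos):
    """Context length in chars while generating each block, with and without eviction."""
    full, compressed = [], []
    seen_blocks = 0  # chars of all earlier blocks
    seen_mems = 0    # chars of all earlier mementos
    for b, m in zip(blocks, mementos):
        # Without eviction the context holds every earlier block and memento.
        full.append(seen_blocks + seen_mems + len(b))
        # With eviction it holds only earlier mementos plus the current block.
        compressed.append(seen_mems + len(b))
        seen_blocks += len(b)
        seen_mems += len(m)
    return full, compressed

# Hypothetical block and memento lengths.
blocks = ["a" * 400, "b" * 300, "c" * 500]
mementos = ["m" * 40, "n" * 30, "o" * 50]

full, compressed = context_sizes(blocks, mementos)
print("peak without eviction:", max(full))
print("peak with eviction   :", max(compressed))
```

Without eviction the context grows with every block, while with eviction it is bounded by the accumulated mementos plus the single block currently being written.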

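We also measure compression in tokens rather than characters. We use the GPT-2 tokenizer as a stand-in and register the memento markers as special tokens, so the absolute counts will differ from those of the tokenizer used by any particular model. Note that this factor is blocks divided by mementos, the inverse of the ratio used in the per-domain table.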

from transformers import AutoTokenizer

tok = AutoTokenizer.from_pretrained("gpt2")

MEM_TOKENS = ["<|block_start|>", "<|block_end|>",
              "<|summary_start|>", "<|summary_end|>",
              "<think>", "</think>"]

tok.add_special_tokens({"additional_special_tokens": MEM_TOKENS})

def tlen(s):
    # Number of tokens in s, without BOS/EOS tokens added by the tokenizer.
    return len(tok(s, add_special_tokens=False).input_ids)

blk_tok = sum(tlen(b) for b in parsed["blocks"])
sum_tok = sum(tlen(s) for s in parsed["summaries"])

print("\nTrace-level token compression for this example:")
print(f" block tokens = {blk_tok}")
print(f" memento tokens = {sum_tok}")
print(f" compression = {blk_tok / max(sum_tok,1):.2f}× (paper reports ~6×)")

Preparing Data for Supervised Fine-Tuning

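Finally, we map each example to a two-turn chat record suitable for fine-tuning: the problem becomes the user message, and the full response, special tokens included, becomes the assistant message.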

def to_chat(ex):
    return {"messages": [
        {"role": "user", "content": ex["problem"]},
        {"role": "assistant", "content": ex["response"]},
    ]}

# map() on a streaming dataset is applied lazily, row by row.
chat_stream = load_dataset(DATASET, split="train", streaming=True).map(to_chat)
chat_ex = next(iter(chat_stream))

print("\nSFT chat example (truncated):")
for m in chat_ex["messages"]:
    print(f" [{m['role']:9s}] {m['content'][:130].replace(chr(10),' ')}...")
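Before fine-tuning, it can be worth dropping traces whose structure is incomplete, mirroring the block/memento count check used in the analysis step. The sketch below is one possible filter, shown on two hand-written rows. The predicate name is_well_formed, the exact checks, and the sample rows are assumptions for illustration, and the dataset may not need this filtering at all.

```python
import re

BLOCK_RE = re.compile(r"<\|block_start\|>(.*?)<\|block_end\|>", re.DOTALL)
SUMMARY_RE = re.compile(r"<\|summary_start\|>(.*?)<\|summary_end\|>", re.DOTALL)

def is_well_formed(response):
    """True if the trace has one closed think section and one memento per block."""
    n_blocks = len(BLOCK_RE.findall(response))
    n_mems = len(SUMMARY_RE.findall(response))
    return (response.count("<think>") == 1
            and response.count("</think>") == 1
            and n_blocks > 0
            and n_blocks == n_mems)

def to_chat(ex):
    return {"messages": [
        {"role": "user", "content": ex["problem"]},
        {"role": "assistant", "content": ex["response"]},
    ]}

# Hypothetical rows: the second one is missing its memento.
rows = [
    {"problem": "Solve 2x = 8.",
     "response": "<think><|block_start|>2x = 8 so x = 4.<|block_end|>"
                 "<|summary_start|>x = 4<|summary_end|></think>x = 4"},
    {"problem": "Broken example.",
     "response": "<think><|block_start|>unfinished reasoning<|block_end|></think>"},
]

chat_rows = [to_chat(r) for r in rows if is_well_formed(r["response"])]
print(len(chat_rows), "of", len(rows), "rows kept")
```

On the streamed dataset, the same predicate could be applied with a filter call on the response column before mapping to_chat, so that only well-formed traces reach the trainer.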

This tutorial walked through a pipeline for working with OpenMementos: streaming and parsing the traces, analyzing compression per domain, simulating inference-time compression, and preparing chat-formatted data for fine-tuning. You should now have a working understanding of how to use the dataset for efficient training and inference.