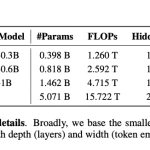

Meta AI has released Sapiens2, a second-generation foundation model for human-centric computer vision. The model is trained on a new dataset of 1 billion human images and comes in sizes ranging from 0.4B to 5B parameters, operating at native 1K resolution with support for up to 4K.
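To give a rough sense of what moving from 1K to 4K input means computationally, here is a back-of-the-envelope sketch. It assumes a ViT-style backbone that splits square images into 16×16 patches and applies global self-attention over all patch tokens. The patch size, the square inputs, and the function names are illustrative assumptions, not details confirmed for Sapiens2.

```python
def patch_tokens(height: int, width: int, patch: int = 16) -> int:
    """Number of non-overlapping patch tokens (patch size 16 is an assumption)."""
    return (height // patch) * (width // patch)


def relative_attention_cost(tokens: int, baseline_tokens: int) -> float:
    """Global self-attention scales with tokens**2; return cost relative to a baseline."""
    return (tokens / baseline_tokens) ** 2


if __name__ == "__main__":
    t_1k = patch_tokens(1024, 1024)  # 64 * 64 tokens
    t_4k = patch_tokens(4096, 4096)  # 256 * 256 tokens
    print(t_1k, t_4k, relative_attention_cost(t_4k, t_1k))
```

Under these assumptions, going from 1024×1024 to 4096×4096 multiplies the token count by 16 (4,096 to 65,536) and the cost of full attention by 256, which is why very high resolutions are expensive for plain global attention.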

Sapiens2 builds on its predecessor by combining masked autoencoder (MAE) pretraining with contrastive learning. MAE helps the model learn fine spatial details by reconstructing masked image patches, while contrastive learning improves semantic understanding. This hybrid approach avoids the "representation drift" that can occur when contrastive methods strip away appearance cues needed for tasks like albedo estimation.
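The hybrid objective can be sketched as a weighted sum of two standard losses: an MAE reconstruction error computed only on the masked patches, and a contrastive loss that pulls embeddings of matching views together. This is a minimal pure-Python sketch under stated assumptions: InfoNCE is used as a representative contrastive loss, and `weight`, `temperature`, and all function names are hypothetical. Meta's actual losses, weighting, and masking scheme may differ.

```python
import math
from typing import List, Sequence

Vector = Sequence[float]


def mae_loss(pred: Sequence[Vector], target: Sequence[Vector], mask: Sequence[bool]) -> float:
    """Mean squared error averaged over masked patches only, as in MAE.

    Visible (unmasked) patches are ignored, so the model is scored only on
    what it had to reconstruct.
    """
    errors = [
        sum((p - t) ** 2 for p, t in zip(pv, tv)) / len(tv)
        for pv, tv, is_masked in zip(pred, target, mask)
        if is_masked
    ]
    if not errors:
        raise ValueError("at least one patch must be masked")
    return sum(errors) / len(errors)


def _normalize(v: Vector) -> List[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def info_nce_loss(anchors: Sequence[Vector], positives: Sequence[Vector], temperature: float = 0.1) -> float:
    """InfoNCE: anchors[i] should be most similar to positives[i] among all positives."""
    a = [_normalize(v) for v in anchors]
    p = [_normalize(v) for v in positives]
    total = 0.0
    for i, ai in enumerate(a):
        # Cosine similarity to every positive in the batch, scaled by temperature.
        logits = [sum(x * y for x, y in zip(ai, pj)) / temperature for pj in p]
        # Numerically stable log-sum-exp for the softmax denominator.
        peak = max(logits)
        log_denom = peak + math.log(sum(math.exp(z - peak) for z in logits))
        total += log_denom - logits[i]  # negative log-probability of the true pair
    return total / len(a)


def hybrid_loss(pred, target, mask, anchors, positives, weight: float = 1.0, temperature: float = 0.1) -> float:
    """Hypothetical combined objective: reconstruction + weight * contrastive."""
    return mae_loss(pred, target, mask) + weight * info_nce_loss(anchors, positives, temperature)
```

The reconstruction term targets the patch content itself, so appearance information such as color has to survive in the representation, while the contrastive term pushes toward view-invariant semantics. Balancing the two through something like `weight` is one simple way to keep both signals.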

The model handles multiple tasks simultaneously, including pose estimation, segmentation, normal estimation, pointmap generation, and albedo recovery. Meta reports significant improvements over the original Sapiens across all benchmarks, particularly in fine-grained human understanding.
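One common way a single foundation model serves several dense tasks is a shared backbone feeding a lightweight head per task. The sketch below assumes that design, which this article does not confirm for Sapiens2, and only illustrates what each listed task predicts per pixel. The head structure, `num_keypoints`, `num_classes`, and full-resolution outputs are hypothetical simplifications.

```python
from dataclasses import dataclass
from typing import Dict, Tuple


@dataclass(frozen=True)
class DenseHead:
    """Hypothetical task head: predicts `channels` values per output pixel."""
    task: str
    channels: int


def build_heads(num_keypoints: int = 17, num_classes: int = 20) -> Dict[str, DenseHead]:
    """One head per task from the article; keypoint and class counts are placeholders."""
    specs = {
        "pose": num_keypoints,        # one heatmap per keypoint
        "segmentation": num_classes,  # one logit per segmentation class
        "normal": 3,                  # surface normal (nx, ny, nz)
        "pointmap": 3,                # 3D point (x, y, z) for each pixel
        "albedo": 3,                  # reflectance color (r, g, b)
    }
    return {task: DenseHead(task, ch) for task, ch in specs.items()}


def output_shapes(heads: Dict[str, DenseHead], height: int, width: int) -> Dict[str, Tuple[int, int, int]]:
    """Channels-first output shape per task, assuming full-resolution outputs."""
    return {task: (head.channels, height, width) for task, head in heads.items()}


if __name__ == "__main__":
    for task, shape in output_shapes(build_heads(), 1024, 768).items():
        print(task, shape)
```

Normals, pointmaps, and albedo all produce three values per pixel but mean different things: a direction, a 3D position, and a surface color, respectively.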

Sapiens2 is open-source, with weights available for research and development. The team believes this model will advance applications in motion capture, augmented reality, and digital human creation.