Video foundation models can generate stunning individual frames but often fail to keep a scene consistent over time. For example, when the camera moves through a corridor in models like Wan2.1 or CogVideoX, walls may warp, objects morph, and details disappear. This reveals a core limitation: these models are fitting 2D pixel correlations rather than simulating a coherent 3D scene.

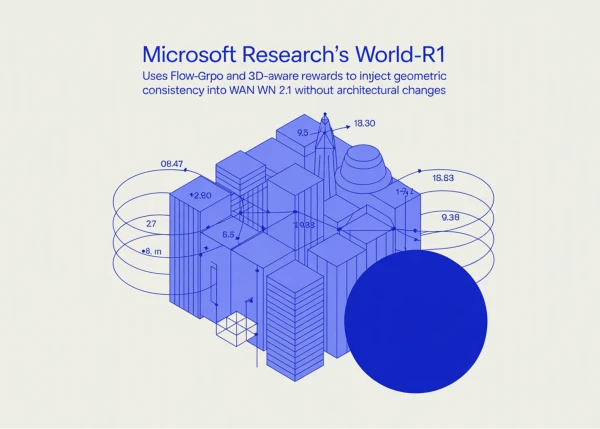

To address this, a team from Microsoft Research and Zhejiang University developed World-R1, a framework that aligns video generation with 3D constraints using reinforcement learning. The key insight is that video foundation models already encode rich 3D information internally. Rather than supervising the model with expensive 3D assets, World-R1 elicits this latent knowledge through post-training with rewards derived from pre-trained 3D models and a vision-language critic. The underlying architecture remains untouched, and inference costs stay unchanged.

Two variants are released: World-R1-Small (built on Wan2.1-T2V-1.3B) and World-R1-Large (built on Wan2.1-T2V-14B).

Flow-GRPO on Flow-Matching Video Models

World-R1 uses Flow-GRPO-Fast, an adaptation of the GRPO algorithm for flow-matching diffusion models. It converts the deterministic ODE sampler into a reverse-time SDE to introduce stochasticity for advantage estimation, then optimizes a clipped GRPO surrogate with KL regularization. The Fast variant adds SDE noise only at randomly selected intermediate steps to reduce rollout costs.
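To make the optimization step more concrete, here is a minimal NumPy sketch of the three ingredients described above: group-relative advantages, a clipped surrogate with a KL penalty, and the choice of which intermediate steps receive SDE noise. This is an illustration of the general GRPO recipe, not World-R1's actual code. The function names, the `clip_eps` and `beta` values, and the assumption that per-sample log-probabilities and KL estimates are supplied by the sampler are all hypothetical.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-8):
    """Normalize rewards within one group of rollouts that share a prompt."""
    rewards = np.asarray(rewards, dtype=np.float64)
    return (rewards - rewards.mean()) / (rewards.std() + eps)

def clipped_grpo_loss(logp_new, logp_old, advantages, kl,
                      clip_eps=0.2, beta=0.01):
    """Clipped surrogate with a KL penalty, averaged over samples.

    logp_new / logp_old: log-probabilities of the sampled stochastic steps
    under the current and the rollout policy (assumed to be given).
    clip_eps and beta are illustrative values, not taken from the source.
    """
    logp_new = np.asarray(logp_new, dtype=np.float64)
    logp_old = np.asarray(logp_old, dtype=np.float64)
    advantages = np.asarray(advantages, dtype=np.float64)
    kl = np.asarray(kl, dtype=np.float64)

    ratio = np.exp(logp_new - logp_old)
    unclipped = ratio * advantages
    clipped = np.clip(ratio, 1.0 - clip_eps, 1.0 + clip_eps) * advantages
    surrogate = np.minimum(unclipped, clipped)
    # Negate because optimizers minimize; the KL term keeps the policy
    # close to the reference model.
    return float(-(surrogate - beta * kl).mean())

def pick_sde_steps(num_steps, num_noisy, rng):
    """Choose which intermediate sampling steps get SDE noise.

    The first and last steps are excluded here; that is an assumption
    about what "intermediate" means.
    """
    candidates = np.arange(1, num_steps - 1)
    return np.sort(rng.choice(candidates, size=num_noisy, replace=False))
```

In this sketch, all remaining steps would use the deterministic ODE update, so only the selected steps contribute log-probabilities to the loss. That is the sense in which restricting the noise reduces rollout cost.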

Training is conducted at 832×480 resolution on 48 NVIDIA H200 GPUs for the Small model and 96 H200s for the Large model, with a GRPO group size of G=8 across 48 parallel groups.

The 3D-Aware Reward

The reward function is the core innovation. For each generated video, the system reconstructs a 3D Gaussian Splatting representation using Depth Anything 3 and estimates the camera trajectory. The composite 3D reward is: R₃D = S_meta + S_recon + S_traj.

- S_meta renders the scene from a meta-view (a camera pose offset from the generation trajectory) and uses Qwen3-VL to score the reconstruction from 0–9 as a “3D vision expert,” penalizing artifacts like floaters, billboards, and texture stretching that look good from the original viewpoint but fail off-axis.

- S_recon re-renders the scene along the estimated trajectory and compares against the original video via 1 − LPIPS.

- S_traj measures the deviation between the requested and recovered trajectories using L2 distance for translation and geodesic distance for rotation, wrapped in a negative exponential.
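The three terms can be sketched in a few lines of NumPy. This is a hedged illustration only: the source does not give the exact normalization or weighting, so the rescaling of the 0–9 critic score to [0, 1], the per-frame averaging, and the `w_trans` / `w_rot` weights are assumptions. The critic score and the LPIPS distance are passed in as plain numbers rather than computed, and both trajectories are assumed to already share a coordinate frame and scale.

```python
import numpy as np

def rotation_geodesic(R_a, R_b):
    """Geodesic angle in radians between two 3x3 rotation matrices."""
    cos_theta = (np.trace(R_a.T @ R_b) - 1.0) / 2.0
    # Clip to guard against values slightly outside [-1, 1] from round-off.
    return float(np.arccos(np.clip(cos_theta, -1.0, 1.0)))

def trajectory_score(t_req, t_rec, R_req, R_rec, w_trans=1.0, w_rot=1.0):
    """Negative exponential of the trajectory deviation.

    t_req, t_rec: (N, 3) requested and recovered camera positions.
    R_req, R_rec: sequences of N requested and recovered 3x3 rotations.
    w_trans, w_rot: hypothetical weights, not taken from the source.
    """
    t_req = np.asarray(t_req, dtype=np.float64)
    t_rec = np.asarray(t_rec, dtype=np.float64)
    trans_err = np.linalg.norm(t_req - t_rec, axis=-1).mean()
    rot_err = np.mean([rotation_geodesic(a, b) for a, b in zip(R_req, R_rec)])
    return float(np.exp(-(w_trans * trans_err + w_rot * rot_err)))

def composite_3d_reward(critic_score_0_to_9, lpips_distance, s_traj):
    """Sum of the three terms: S_meta + S_recon + S_traj."""
    s_meta = critic_score_0_to_9 / 9.0   # assumption: rescale to [0, 1]
    s_recon = 1.0 - lpips_distance       # as stated in the text
    return s_meta + s_recon + s_traj
```

With this form, a video whose recovered trajectory exactly matches the request gets a trajectory score of 1, and the score decays smoothly toward 0 as the translation or rotation error grows.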