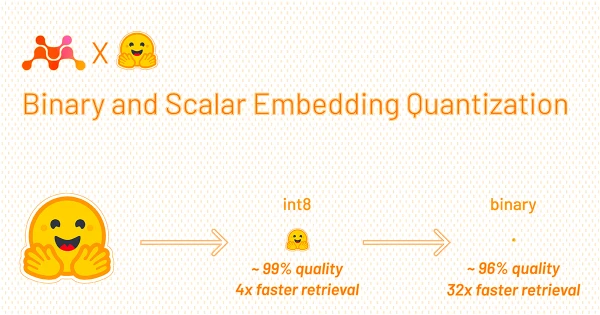

A breakthrough in embedding quantization promises to make vector retrieval dramatically faster and cheaper. By applying binary and scalar quantization methods, developers can reduce the memory footprint and computational cost of similarity search with only a small loss in accuracy.

Traditional retrieval systems rely on floating-point embeddings, which take up significant storage and add latency to every search. The new approach converts these embeddings into compact binary or scalar (integer) representations, so that similarity scores such as dot product and cosine similarity can be computed, or closely approximated, with simple bitwise or integer arithmetic.
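To make the idea concrete, here is a minimal sketch in plain Python of how the two schemes can work. It is not the implementation used by any particular library. The function names, the sign-based thresholding rule, the symmetric int8 range, and the toy four-dimensional vectors are all assumptions chosen for illustration. Binary quantization keeps one bit per dimension and compares vectors by Hamming distance (XOR, then count the set bits). Scalar quantization maps each float to a small integer so the dot product becomes integer arithmetic.

```python
from typing import List, Sequence


def binary_quantize(vec: Sequence[float]) -> int:
    """Keep one bit per dimension (1 if the value is > 0), packed into an int."""
    bits = 0
    for x in vec:
        bits = (bits << 1) | (1 if x > 0 else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count differing bits: XOR the codes, then count the set bits."""
    return bin(a ^ b).count("1")


def scalar_quantize(vec: Sequence[float], max_abs: float) -> List[int]:
    """Map floats in [-max_abs, max_abs] linearly onto integers in [-127, 127].

    max_abs is a calibration value (assumed here to be known in advance);
    anything outside the range is clipped.
    """
    scale = 127.0 / max_abs
    return [max(-127, min(127, round(x * scale))) for x in vec]


def int_dot(a: Sequence[int], b: Sequence[int]) -> int:
    """Dot product using integer arithmetic only."""
    return sum(x * y for x, y in zip(a, b))


# Toy data (hypothetical): doc_a points roughly the same way as the query,
# doc_b points roughly the opposite way.
query = [0.12, -0.40, 0.33, -0.05]
doc_a = [0.10, -0.35, 0.30, 0.02]
doc_b = [-0.20, 0.25, -0.10, 0.40]

# Binary: a smaller Hamming distance means a more similar vector.
q_bits = binary_quantize(query)
dist_a = hamming_distance(q_bits, binary_quantize(doc_a))  # 1 bit differs
dist_b = hamming_distance(q_bits, binary_quantize(doc_b))  # 4 bits differ

# Scalar: a larger integer dot product means a more similar vector.
MAX_ABS = 0.4  # assumed calibration range for this toy data
q_int = scalar_quantize(query, MAX_ABS)
score_a = int_dot(q_int, scalar_quantize(doc_a, MAX_ABS))
score_b = int_dot(q_int, scalar_quantize(doc_b, MAX_ABS))

print(dist_a, dist_b, score_a > score_b)
```

In this toy case both quantized representations rank `doc_a` ahead of `doc_b`, matching the ordering the original float vectors would give. On real embeddings the ordering is only approximately preserved, which is where the accuracy trade-off comes from.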

Early results show up to a 10x reduction in retrieval time and a 20x decrease in memory usage, while retaining over 95% of the original accuracy on standard benchmarks. This opens the door to deploying high-performance retrieval in resource-constrained environments, from mobile apps to large-scale production systems.

Researchers are already integrating these quantization techniques into popular vector databases and search libraries, making the improvements widely accessible.