Transformers have achieved remarkable success in NLP and computer vision, largely due to their self-attention mechanism. However, standard self-attention has quadratic time and memory complexity in sequence length, making it impractical for long inputs. The Nyströmformer, introduced in a recent paper, offers an efficient alternative by approximating self-attention with linear complexity.

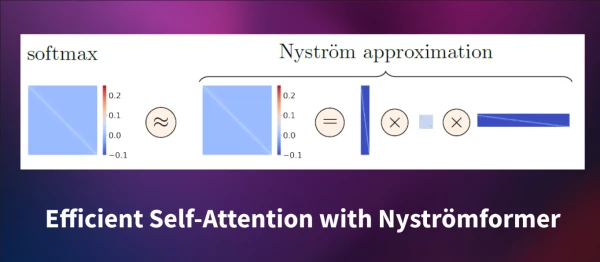

At its core, Nyströmformer leverages the Nyström method for matrix approximation. This technique approximates a large matrix by sampling a subset of its rows and columns. In the context of self-attention, the softmax matrix is expensive to compute directly. Instead of sampling from the softmax matrix, Nyströmformer selects landmarks from the queries and keys. These landmarks are used to construct three smaller matrices that approximate the full attention matrix.

Specifically, let Q and K be the query and key matrices. The model selects m landmarks (e.g., 32 or 64) by segmenting tokens and computing segment means. These form the matrices Q̃ and K̃. Then, three matrices are computed:

- F̃ = softmax(Q K̃ᵀ / √d) of size n × m,

- Ã = pseudoinverse of softmax(Q̃ K̃ᵀ / √d) of size m × m,

- B̃ = softmax(Q̃ Kᵀ / √d) of size m × n.

The approximate attention is then F̃ Ã B̃ V, where V is the value matrix. This avoids computing the full QKᵀ product, reducing complexity to O(n).

Empirically, Nyströmformer performs competitively with standard attention on tasks like GLUE and ImageNet, even for sequences up to 8192 tokens. The method also includes a depthwise convolution skip connection to improve stability.

Nyströmformer is available via Hugging Face and can be used with the transformers library. This innovation makes it feasible to process long documents, videos, and other sequence-heavy data without sacrificing performance.