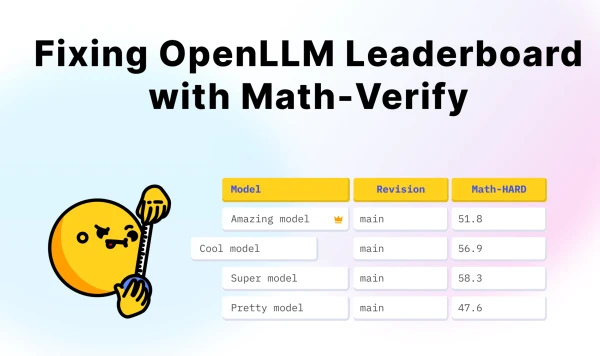

The Open LLM Leaderboard, a widely used platform for evaluating large language models, has integrated a new verification tool called Math-Verify to improve the accuracy of its benchmark scoring. This update addresses long-standing concerns about how model outputs are scored, particularly for mathematical reasoning tasks.

Math-Verify parses each model response and validates it against the ground-truth answer, which reduces scoring errors caused by formatting inconsistencies or partial matches. The leaderboard maintainers hope this will provide a more reliable comparison of model performance.
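To illustrate why parsing matters, here is a minimal sketch that contrasts plain string matching with comparison after parsing. It is not Math-Verify's implementation or API: the function names `exact_match`, `parse_number`, and `parsed_match` are hypothetical, and the parser handles only simple numeric answers (integers, decimals, `a/b`, and `\frac{a}{b}`) using Python's standard library.

```python
import re
from fractions import Fraction

# Hypothetical pattern for a simple LaTeX fraction such as \frac{1}{2}.
_LATEX_FRAC = re.compile(r"\\frac\{(-?\d+)\}\{(-?\d+)\}")


def exact_match(prediction: str, gold: str) -> bool:
    """Naive scoring: the two strings must be identical after trimming."""
    return prediction.strip() == gold.strip()


def parse_number(text: str) -> Fraction:
    """Turn a simple numeric answer into an exact Fraction.

    Illustrative only: supports integers, decimals, a/b, and \\frac{a}{b},
    optionally wrapped in $...$. Raises ValueError on anything else.
    """
    cleaned = text.strip().strip("$").replace(" ", "")
    match = _LATEX_FRAC.fullmatch(cleaned)
    if match:
        return Fraction(int(match.group(1)), int(match.group(2)))
    return Fraction(cleaned)  # accepts "3", "0.5", "1/2"


def parsed_match(prediction: str, gold: str) -> bool:
    """Score by value: parse both sides, then compare exactly."""
    try:
        return parse_number(prediction) == parse_number(gold)
    except (ValueError, ZeroDivisionError):
        # An unparseable answer is treated as incorrect.
        return False


if __name__ == "__main__":
    gold = r"$\frac{1}{2}$"
    for prediction in ["0.5", "1/2", "0.4", "no answer"]:
        print(prediction, exact_match(prediction, gold), parsed_match(prediction, gold))
```

In this sketch, a response of `0.5` fails the string comparison against `\frac{1}{2}` but passes once both sides are parsed to the same value, which is the kind of formatting mismatch described above.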

The tool's developers have released it as open source, allowing the AI community to inspect and contribute to the verification logic. Early tests show that Math-Verify can catch subtle errors that previous scoring methods missed, which could change the rankings of some models.

This move reflects the broader push for transparency and rigor in AI evaluation, as benchmarks play a critical role in guiding research and deployment decisions.