Researchers have introduced Ulysses Sequence Parallelism, a training technique that enables models to handle context windows of up to one million tokens. It addresses a key bottleneck in long-context language models, which have traditionally been limited by memory constraints and computational cost: the activations a single GPU must hold grow with sequence length, and standard self-attention compute grows quadratically with it.

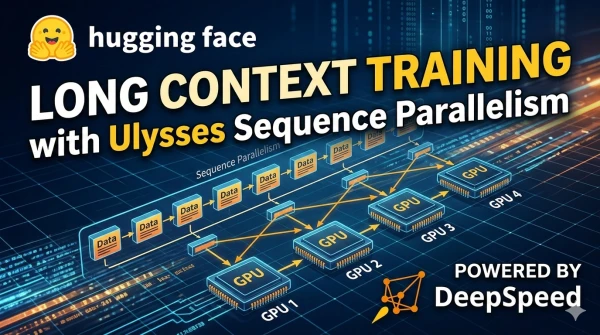

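Instead of processing an entire sequence on a single GPU, Ulysses splits the sequence along the sequence dimension and distributes the pieces across multiple devices, so each device stores activations for only a shorter sub-sequence. Because attention still needs every token, an all-to-all exchange right before the attention step re-partitions the queries, keys, and values by attention head: each device then computes attention over the full sequence for a non-overlapping subset of heads, and a second all-to-all restores the sequence-sharded layout. This drastically reduces memory usage per GPU while maintaining training throughput.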
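To make the re-partitioning concrete, here is a minimal single-process sketch in pure Python that simulates P devices as list entries. It is an illustration under stated assumptions, not the authors' implementation: the helper names (`seq_to_head`, `head_to_seq`, `ulysses_attention`) are hypothetical, attention is non-causal for brevity, and the "all-to-all" is plain list reshuffling rather than a real collective such as `torch.distributed.all_to_all`. The script checks that the sharded computation reproduces ordinary attention on the unsharded sequence.

```python
import math
import random


def attention(q, k, v):
    """Non-causal softmax attention for one head; q, k, v are lists of N vectors of length d."""
    d = len(q[0])
    out = []
    for qi in q:
        scores = [sum(a * b for a, b in zip(qi, kj)) / math.sqrt(d) for kj in k]
        m = max(scores)  # subtract the max for numerical stability
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        out.append([sum(w * vj[c] for w, vj in zip(weights, v)) / total for c in range(d)])
    return out


def seq_to_head(shards, num_heads):
    """Simulated all-to-all: (N/P tokens, all H heads) per device -> (all N tokens, H/P heads)."""
    num_dev = len(shards)
    hp = num_heads // num_dev
    # Device p gathers its own head slice from every device, in global token order.
    return [[tok[p * hp:(p + 1) * hp] for src in shards for tok in src] for p in range(num_dev)]


def head_to_seq(shards, seq_len):
    """Inverse all-to-all: (all N tokens, H/P heads) per device -> (N/P tokens, all H heads)."""
    num_dev = len(shards)
    chunk = seq_len // num_dev
    # Token t regains its full head list by concatenating every device's head slice in order.
    return [
        [[head for src in shards for head in src[t]] for t in range(p * chunk, (p + 1) * chunk)]
        for p in range(num_dev)
    ]


def ulysses_attention(q_shards, k_shards, v_shards, num_heads):
    seq_len = sum(len(s) for s in q_shards)
    q_h, k_h, v_h = (seq_to_head(x, num_heads) for x in (q_shards, k_shards, v_shards))
    out_h = []
    for qd, kd, vd in zip(q_h, k_h, v_h):  # one iteration per simulated device
        hp = len(qd[0])
        # Each local head attends over the FULL sequence.
        per_head = [
            attention([t[h] for t in qd], [t[h] for t in kd], [t[h] for t in vd])
            for h in range(hp)
        ]
        out_h.append([[per_head[h][t] for h in range(hp)] for t in range(seq_len)])
    return head_to_seq(out_h, seq_len)


def random_tensor(n, h, d):
    return [[[random.gauss(0.0, 1.0) for _ in range(d)] for _ in range(h)] for _ in range(n)]


def shard_by_sequence(x, num_dev):
    chunk = len(x) // num_dev
    return [x[p * chunk:(p + 1) * chunk] for p in range(num_dev)]


random.seed(0)
N, H, D, P = 8, 4, 3, 2  # tokens, heads, head dim, simulated devices
q, k, v = random_tensor(N, H, D), random_tensor(N, H, D), random_tensor(N, H, D)

out_shards = ulysses_attention(*(shard_by_sequence(x, P) for x in (q, k, v)), H)

# Reference: ordinary attention on the unsharded sequence, one head at a time.
ref_by_head = [attention([t[h] for t in q], [t[h] for t in k], [t[h] for t in v]) for h in range(H)]
merged = [tok for shard in out_shards for tok in shard]
max_err = max(
    abs(a - b)
    for t in range(N)
    for h in range(H)
    for a, b in zip(merged[t][h], ref_by_head[h][t])
)
print(f"shard sizes: {[len(s) for s in out_shards]}, max abs error: {max_err:.2e}")
```

Each simulated device only holds N/P tokens of the input and output; the full sequence is materialized only inside the attention step, and only for H/P heads. In this sketch both N and H must be divisible by P.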

Experiments demonstrate that models trained with this method achieve performance comparable to or better than baseline approaches on long-range benchmarks, such as document summarization and multi-hop question answering. The technique is compatible with existing Transformer architectures and can be integrated with other parallelism strategies like data and tensor parallelism.
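One way to picture combining sequence parallelism with data parallelism is as a 2D grid of ranks. The sketch below assumes a common but not universal layout: contiguous ranks form a sequence-parallel (SP) group that jointly processes one long sequence through the all-to-all exchanges, and ranks at the same position across SP groups form a data-parallel (DP) group that sees different batches. The helper `build_groups` is hypothetical; real frameworks such as DeepSpeed construct these process groups with their own utilities.

```python
def build_groups(world_size, sp_size):
    """Hypothetical layout: contiguous sequence-parallel groups, strided data-parallel groups."""
    if world_size % sp_size != 0:
        raise ValueError("world_size must be divisible by sp_size")
    dp_size = world_size // sp_size
    # Ranks that split one sequence and exchange activations via all-to-all.
    sp_groups = [list(range(g * sp_size, (g + 1) * sp_size)) for g in range(dp_size)]
    # Ranks holding the same sequence slice position but processing different data.
    dp_groups = [list(range(i, world_size, sp_size)) for i in range(sp_size)]
    return sp_groups, dp_groups


sp_groups, dp_groups = build_groups(world_size=8, sp_size=4)
print(sp_groups)  # [[0, 1, 2, 3], [4, 5, 6, 7]]
print(dp_groups)  # [[0, 4], [1, 5], [2, 6], [3, 7]]
```

Keeping SP groups on contiguous ranks is a typical choice because adjacent ranks usually share a node, where the frequent all-to-all traffic is cheapest.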

The researchers emphasize that the method is particularly promising for applications requiring extreme context lengths, such as legal document analysis, scientific paper retrieval, and conversational AI that needs to recall extensive conversation history. Future work will focus on optimizing communication overhead and extending the approach to even longer contexts.