Large language models (LLMs) have revolutionized machine learning with their ability to process unstructured data, but their size often demands expensive high-end GPUs. A quantization technique called SmoothQuant now lets these models run efficiently on Intel CPUs, cutting costs with little or no loss of accuracy.

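Quantization reduces model size by converting 16-bit floating-point parameters to 8-bit integers, which roughly halves the memory footprint. However, LLMs contain outlier activation channels, a small number of channels whose magnitudes are far larger than the rest. These degrade quality under standard quantization because they stretch the INT8 range and leave little resolution for typical values. SmoothQuant addresses this by applying a joint mathematical transformation to weights and activations: it scales down the outlier activation channels and scales up the matching weights by the same per-channel factors, which leaves the layer output unchanged and makes LLMs 'quantization-friendly' for INT8 precision.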
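To make the transformation concrete, here is a minimal pure-Python sketch of the per-channel smoothing idea on a toy linear layer. It assumes the scale formula from the SmoothQuant paper, `s_j = max|X_j|^alpha / max|W_j|^(1 - alpha)`, with `alpha = 0.5`. The matrices, the helper names, and the simple per-tensor "fake quantization" are illustrative assumptions, not Intel's implementation. In practice the scales would come from calibration data rather than from a single toy batch.

```python
def matmul(A, B):
    """Plain-Python matrix product of A (m x k) and B (k x n)."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def fake_quant_int8(M):
    """Symmetric per-tensor INT8: snap values to a 255-level grid, then dequantize."""
    step = max(abs(v) for row in M for v in row) / 127
    return [[round(v / step) * step for v in row] for row in M]


def smoothing_scales(X, W, alpha=0.5):
    """One scale per input channel j: max|X[:, j]|**alpha / max|W[j, :]|**(1 - alpha)."""
    scales = []
    for j, w_row in enumerate(W):
        act_max = max(abs(x_row[j]) for x_row in X)
        wt_max = max(abs(w) for w in w_row)
        scales.append(act_max ** alpha / wt_max ** (1 - alpha))
    return scales


def smooth(X, W, scales):
    """Divide activation channels by s and multiply weight rows by s, so X @ W is unchanged."""
    X_s = [[x / s for x, s in zip(row, scales)] for row in X]
    W_s = [[w * s for w in row] for row, s in zip(W, scales)]
    return X_s, W_s


def mean_abs_error(A, B):
    diffs = [abs(a - b) for ra, rb in zip(A, B) for a, b in zip(ra, rb)]
    return sum(diffs) / len(diffs)


# Toy data (hypothetical): 2 tokens x 3 channels, with channel 1 as the outlier channel.
X = [[0.5, 60.0, -0.3],
     [-0.4, -80.0, 0.2]]
# Weights: 3 input channels x 2 output features.
W = [[0.2, -0.1],
     [0.04, 0.1],
     [-0.3, 0.25]]

Y = matmul(X, W)  # full-precision reference output
X_s, W_s = smooth(X, W, smoothing_scales(X, W))

err_plain = mean_abs_error(Y, matmul(fake_quant_int8(X), fake_quant_int8(W)))
err_smooth = mean_abs_error(Y, matmul(fake_quant_int8(X_s), fake_quant_int8(W_s)))

print(f"INT8 error without smoothing: {err_plain:.4f}")
print(f"INT8 error with smoothing:    {err_smooth:.4f}")
```

On this toy input the smoothed layer reproduces the full-precision output more closely, because the outlier channel no longer dominates the activation's quantization range. The choice of `alpha` controls how much of the quantization difficulty is moved from activations to weights, and a real deployment would tune it per model.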

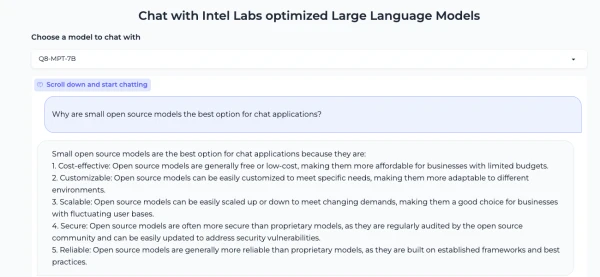

Intel has quantized several popular LLMs (including OPT, LLaMA, Alpaca, Vicuna, BloomZ, and MPT-7B) using SmoothQuant. Results show that models like OPT maintain or even improve accuracy on most benchmarks while running significantly faster. For example, the MPT-7B-chat model generates text in real time on a single Intel Sapphire Rapids CPU.

This makes state-of-the-art LLMs accessible to organizations without GPU clusters and brings low-latency applications such as search and chatbots within reach of standard CPU servers.