Researchers have released a novel open-weight language model trained exclusively on English text from before 1931, aiming to study historical reasoning and generalization without modern data contamination.

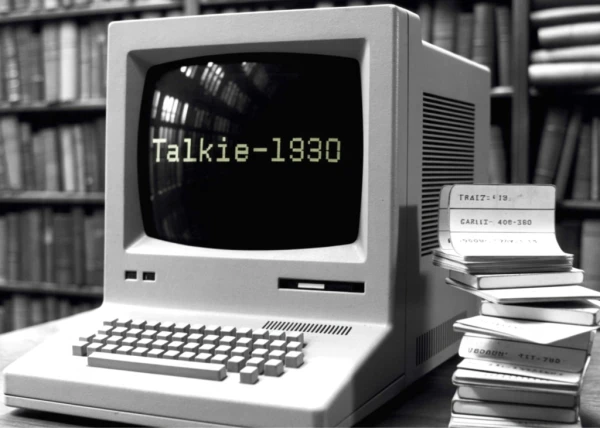

The model, named talkie-1930-13b-base, has 13 billion parameters and was trained on 260 billion tokens drawn from pre-1931 books, newspapers, journals, patents, and legal texts. Its knowledge cutoff is December 31, 1930. Works published by that date are in the public domain in the United States, which makes the training data legally accessible.
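As a rough illustration of what a hard knowledge cutoff implies for data preparation, the sketch below filters a document collection by publication date. Only the cutoff date comes from the article; the `Document` type, its fields, and the sample records are hypothetical, and nothing here is meant to describe the team's actual pipeline.

```python
from dataclasses import dataclass
from datetime import date

# Cutoff date as stated in the article; everything else is a hypothetical sketch.
CUTOFF = date(1930, 12, 31)


@dataclass(frozen=True)
class Document:
    """Hypothetical record; a real corpus would carry richer metadata."""
    title: str
    published: date  # assumed to come from catalogue metadata
    text: str


def is_in_corpus(doc: Document, cutoff: date = CUTOFF) -> bool:
    """Keep a document only if it was published on or before the cutoff."""
    return doc.published <= cutoff


def filter_corpus(docs: list[Document], cutoff: date = CUTOFF) -> list[Document]:
    """Return the documents that fall on or before the cutoff, in input order."""
    return [doc for doc in docs if is_in_corpus(doc, cutoff)]


# Made-up sample records, used only to exercise the filter.
sample_docs = [
    Document("Sample newspaper issue", date(1929, 10, 30), "..."),
    Document("Sample patent filing", date(1930, 12, 31), "..."),
    Document("Sample journal article", date(1931, 1, 1), "..."),
]

kept_titles = [doc.title for doc in filter_corpus(sample_docs)]
print(kept_titles)
```

In practice the hard part is likely to be the metadata rather than the comparison: reprints, later editions, and undated scans can all carry text written after the nominal cutoff, so a date field alone is a weak guarantee.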

The team includes Nick Levine, David Duvenaud, and Alec Radford. They have also released an instruction-tuned version, talkie-1930-13b-it, along with a live demo at talkie-lm.com where users can interact with the model.

According to the researchers, this "vintage language model" approach offers several advantages:

- Contamination-free evaluation: Because the model has never seen modern data, it provides a clean baseline for testing generalization on contemporary benchmarks. For example, the team tested whether the model could learn Python despite never having seen any programming language examples.

- Historical reasoning: The model can analyze historical documents, literature, and scientific works from the early 20th century without the bias of modern knowledge.

- Research transparency: Because only public domain texts are used, the training data is fully auditable and can be replicated by other teams.

The project is non-profit, and the model is open-weight: its parameters are publicly available for research. However, the team notes that the model has no knowledge of anything after 1930, such as World War II or the internet, which may limit its practical applications.