Recently, the release of Falcon and its addition to the Open LLM Leaderboard sparked a heated discussion on Twitter. The controversy centered on the MMLU (Massive Multitask Language Understanding) benchmark scores, and specifically on why LLaMA's numbers on the leaderboard were much lower than those reported in the original LLaMA paper.

To get to the bottom of this, we collaborated with Javier M. (who worked on LLaMA's evaluations) and the Falcon team. Here's what we discovered about the hidden complexities of LLM evaluation.

What is the Open LLM Leaderboard?

The Open LLM Leaderboard is a wrapper around EleutherAI's open-source LM Evaluation Harness. It runs evaluations when Hugging Face's compute cluster has spare cycles and stores results on the Hub. For LLaMA models, the MMLU scores from this harness differed significantly from those in the paper.

1001 Flavors of MMLU

Digging deeper, we found three different implementations of the MMLU evaluation:

- The EleutherAI Harness implementation

- Stanford's HELM implementation

- The original UC Berkeley implementation (with Hugging Face integration)

To compare them, we ran all three on several models. The results were startling: the same model received noticeably different scores depending on the implementation, and in some cases the ranking of models changed.

How LLM Evaluation Works

MMLU is a multiple-choice test with four options per question. LLMs can be evaluated in two ways:

- Probability-based: Compute the probability the model assigns to each answer choice as a continuation of the prompt, and pick the most probable one.

- Generation-based: Let the model generate text freely, then match that text against the choices.

The discrepancies arise because each implementation handles prompt formatting, answer selection, and scoring slightly differently.
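
To make the two approaches concrete, here is a minimal sketch in Python. It does not reproduce any of the three implementations: the function names, the regular expression, and the log-probability values are all made up for illustration, and a real evaluation would obtain the scores and the generated text from the model.

```python
import re

LETTERS = ["A", "B", "C", "D"]


def pick_by_probability(logprobs):
    """Return the letter the model scored highest as a continuation.

    `logprobs` maps each answer letter to a (hypothetical) log-probability
    of that letter following the prompt.
    """
    return max(LETTERS, key=lambda letter: logprobs[letter])


def pick_by_generation(generated_text):
    """Return the answer letter the generated text starts with, or None.

    Only a standalone A/B/C/D at the very start counts, so "Answer: B"
    is not mistaken for choice A.
    """
    match = re.match(r"\s*([ABCD])\b", generated_text)
    return match.group(1) if match else None


# Made-up scores for one question (assumption, not real model output).
example_logprobs = {"A": -2.3, "B": -0.4, "C": -3.1, "D": -1.9}

print(pick_by_probability(example_logprobs))   # B
print(pick_by_generation(" B. Paris"))         # B
print(pick_by_generation("The answer is B"))   # None
```

The last call shows why the generation-based route is sensitive to matching rules: the model gave the right letter, but a strict matcher that only looks at the start of the output counts it as a miss.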

Key Findings

We identified three major sources of variance:

- Prompt style: Whether the question is repeated, formatting of choices, etc.

- Zero-shot vs. few-shot: Some implementations include example questions; others don't.

- Normalization: How answer probabilities are normalized (e.g., dividing by length).
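
A small Python sketch can show how two of these choices play out. It is an illustration under stated assumptions, not the code of any harness: `build_prompt` uses one plausible layout (a "Question:" prefix, lettered choices, an "Answer:" suffix), `best_choice` normalizes by character length as one possible normalization, and the log-probabilities are invented.

```python
def build_prompt(question, choices, examples=()):
    """Format a question, optionally preceded by solved few-shot examples.

    `examples` is a sequence of (question, choices, answer_letter) tuples.
    The layout is a hypothetical format chosen for illustration.
    """
    def render(q, opts, answer=""):
        lines = [f"Question: {q}"]
        lines += [f"{letter}. {text}" for letter, text in zip("ABCD", opts)]
        lines.append(f"Answer:{' ' + answer if answer else ''}")
        return "\n".join(lines)

    blocks = [render(q, opts, ans) for q, opts, ans in examples]
    blocks.append(render(question, choices))
    return "\n\n".join(blocks)


def best_choice(scored, normalize=False):
    """Pick the answer text with the highest log-probability.

    `scored` maps answer text to its summed log-probability (made up here).
    With normalize=True each sum is divided by the answer's character length.
    """
    def score(item):
        text, logprob = item
        return logprob / len(text) if normalize else logprob

    return max(scored.items(), key=score)[0]


shot = ("2 + 2 = ?", ["3", "4", "5", "6"], "B")
question = ("Capital of France?", ["Rome", "Paris", "Bonn", "Oslo"])

zero_shot = build_prompt(*question)
one_shot = build_prompt(*question, examples=[shot])
print(one_shot)

# Invented scores: the longer answer has a lower total but a better per-character score.
scored = {"Paris": -4.0, "The city of Paris": -6.0}
print(best_choice(scored))                  # Paris
print(best_choice(scored, normalize=True))  # The city of Paris
```

With the same invented scores, the selected answer flips once length normalization is switched on, which is the kind of difference that can move a benchmark score without any change to the model.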

Our full comparison table is at the end of this post.

Lessons for the Community

- Always report evaluation configuration: Code, prompt format, and hyperparameters matter.

- Be wary of single-number comparisons: MMLU scores from different platforms are not directly comparable.

- Use standardized harnesses: The EleutherAI Harness and HELM each provide a consistent baseline, as long as every model being compared is scored with the same one.

As a result, the Open LLM Leaderboard is updating its MMLU implementation to align with best practices and ensure reproducibility.

"This investigation shows that even 'simple' multiple-choice evaluations have hidden complexity," said one of the authors.

Stay tuned for the updated leaderboard, and always question the numbers you see.

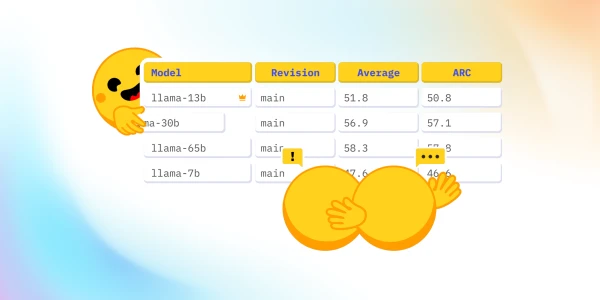

Full Evaluation Comparison Table

| Model | Original Implementation | HELM Implementation | Harness Implementation |
|---|---|---|---|
| LLaMA-7B | 38.9 | 38.5 | 35.1 |
| LLaMA-13B | 46.9 | 44.5 | 41.2 |
| Falcon-7B | 39.7 | 39.9 | 36.8 |

Numbers are illustrative; see full results in the blog post.