Every day, developers and organizations adopt models hosted on Hugging Face to turn ideas into proof-of-concept demos and production applications. Transformer models power NLP, computer vision, and speech tasks, while diffusers enable text-to-image and image-to-image generation. Hugging Face hosts all popular architectures on its Hub.

At Hugging Face, we simplify ML development and operations without compromising quality. The ability to test and deploy the latest models with minimal friction is critical throughout the ML project lifecycle. Thanks to Intel's sponsorship, our free CPU-based inference solutions are now faster with Intel Xeon Ice Lake architecture.

Here are your inference options with Hugging Face.

Free Inference Widget

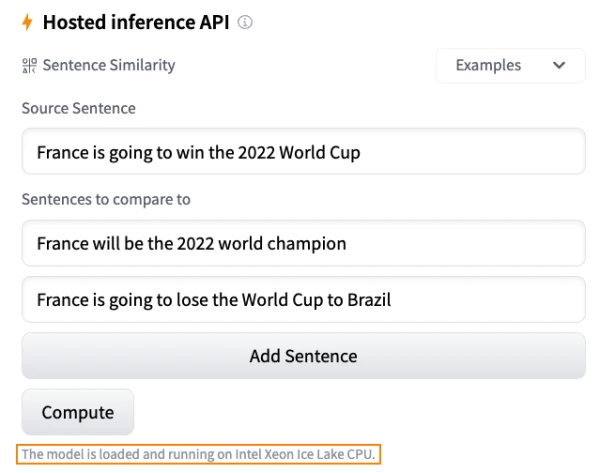

The Inference Widget on the Hub lets you upload sample data and run predictions with a single click. For example, you can try the sentence-transformers/all-MiniLM-L6-v2 model for sentence similarity. The model loads on-demand and unloads when not needed. No code required, and it's free.

Free Inference API

The Inference API powers the widget. With a simple HTTP request, you can load any Hub model and get predictions in seconds. For instance:

curl https://api-inference.huggingface.co/models/xlm-roberta-base \

-X POST \

-d '{"inputs": "The answer to the universe is <mask>."}' \

-H "Authorization: Bearer HF_TOKEN"

The API is free to use during development and testing, but rate limiting is enforced. For production, consider Inference Endpoints.

Production with Inference Endpoints

Inference Endpoints let you deploy any Hub model on secure, scalable infrastructure in your AWS or Azure region. You can choose CPU or GPU hosting, built-in auto-scaling, and more. Pricing starts at $0.06 per hour.

Security levels:

- Public: accessible without authentication.

- Protected: requires a Hugging Face token.

- Private: accessible only via private connection in your AWS or Azure account.

Learn more in the tutorial and documentation.

Spaces

Spaces allows deployment on top of a UI framework like Gradio, with options for upgraded hardware (Intel CPUs, NVIDIA GPUs). It's ideal for demos.

Getting Started

Log in to the Hub and browse models. Try the Inference Widget on any model page. Click "Deploy" to get auto-generated code for the free API or a link to deploy to Inference Endpoints or Spaces.

We'd love your feedback on the Hugging Face forum.